StableIDM: Stabilizing Inverse Dynamics Model against Manipulator Truncation via Spatio-Temporal Refinement

StableIDM introduces a spatio-temporal refinement framework to stabilize inverse dynamics models against manipulator truncation through auxiliary masking, directional feature aggregation, and...

StableIDM: Stabilizing Inverse Dynamics Model against Manipulator Truncation via Spatio-Temporal Refinement

The Fragility of Robot Vision: The Truncation Challenge

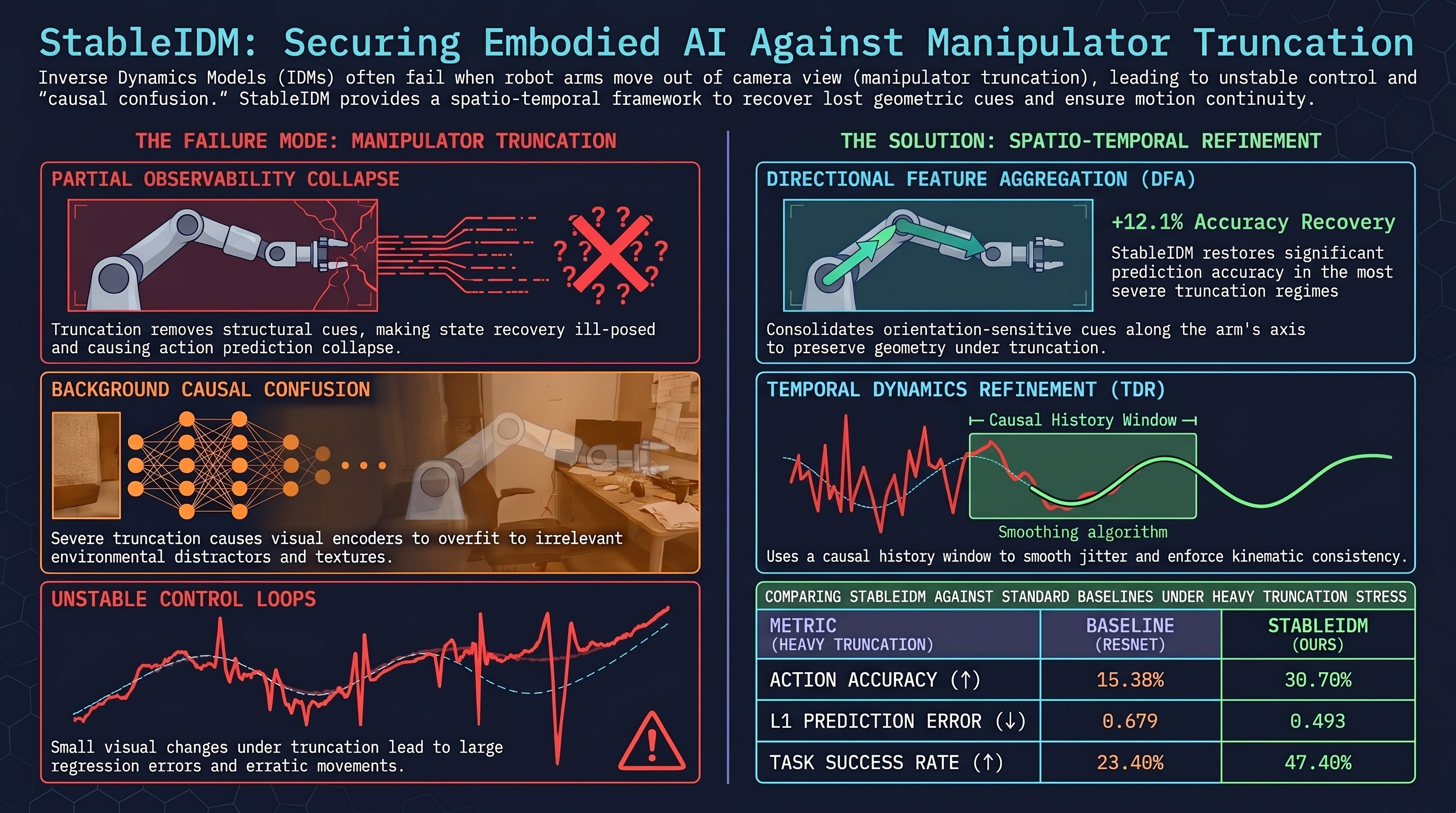

In the progression toward general-purpose embodied intelligence, a systematic failure mode remains pervasive yet insufficiently addressed: manipulator truncation. This phenomenon occurs when the robot arm moves partially out of the camera’s field of view, leaving only a short, disconnected segment visible. For standard Inverse Dynamics Models (IDMs)—which serve as the critical bridge mapping visual observations to executable actions—this truncation transforms a standard regression task into an ill-posed state recovery crisis.

When the majority of the manipulator vanishes from the frame, standard models lose the structural and geometric cues necessary to infer the arm’s full configuration. This leads to unstable control and catastrophic error spikes in action prediction. StableIDM addresses this fundamental challenge of partial observability through a synergistic spatio-temporal refinement framework designed to stabilize control even under severe truncation by explicitly “repairing” the visual and kinematic state.

Why Current Models Fail: Identifying the Core Weaknesses

Our analysis of standard IDM paradigms, which typically rely on single-frame regression (), identifies two architectural bottlenecks that lead to system collapse during truncation:

- Temporal Memorylessness: Single-frame models lack the historical context required to reconstruct the orientation of truncated links. Without a temporal window, the model cannot utilize previous motion trajectories to disambiguate the current position of hidden joints.

- Isotropic Spatial Aggregation: Standard Vision Transformer (ViT) backbones utilize global self-attention that mixes spatial information uniformly. This isotropic approach fails to preserve the fine-grained, directional cues of articulated segments. When the arm is partially visible, these models cannot isolate the specific geometric axis of the remaining manipulator segment, leading to “signal collapse” where the model fails to distinguish the robot from the background.

The StableIDM Architecture: A Synergistic Three-Stage Pipeline

To restore stability, StableIDM utilizes a “clean-repair-reason-smooth” flow. This pipeline leverages a fixed-length sliding window () to recover geometric cues without the risk of compounding errors often found in long-term state estimation.

Stage 1: Robot-centric Masking and Noise Suppression

The first stage addresses causal confusion—a phenomenon where high-capacity encoders overfit to background textures and stationary clutter rather than the arm’s kinematics. We utilize Segment Anything (SAM) as an auxiliary preprocessing step to generate a binary mask of the manipulator. By filtering out environmental distractors, we ensure the encoder’s representational capacity is allocated exclusively to the remaining visible geometry of the arm.

Stage 2: Directional Feature Aggregation (DFA)

Because truncation removes 3D structural cues, DFA treats the visible segment as a 2D geometric proxy.

- Anisotropic Reasoning: The module extracts features along four canonical directions spanning . This disentangles spatial information into directional components sensitive to the oriented edges of the robot’s links.

- Contextual Weighting: Adaptive directional weights are derived from the encoder’s CLS and register tokens via a Multi-Layer Perceptron (MLP). This allows the model to dynamically amplify the most reliable directional evidence (e.g., up-weighting horizontal extractors if the arm is truncated vertically).

Stage 3: Temporal Dynamics Refinement (TDR)

The TDR module provides sequential synergy by refining features both before and after spatial reasoning:

- Temporal Fusion (The Repair): Operating on visual feature maps, this module uses a learned visibility gate and a deformation field to “borrow” structural information from adjacent frames. This repairs the current incomplete feature map before it enters the DFA module.

- Temporal Regressor (The Smooth): After DFA produces a high-level descriptor, a causal Temporal Convolutional Network (TCN)—utilizing dilation factors of 1, 2, 4, and 8—analyzes the history of the last four frames. This enforces kinematic consistency and smooths out the jitters inherent in partially observed states.

Empirical Evidence: Stability Under Heavy Truncation

We benchmarked StableIDM against state-of-the-art baselines on the AgiBot World benchmark, a standardized stress test for partial observability. We define “Heavy Truncation” as a regime where the arm mask occupancy is less than 15% of the total pixels.

| Method | Truncation | Acc (Strict) ↑ | Mean Per-Dim Acc ↑ | L1 Distance ↓ |

|---|---|---|---|---|

| StableIDM (Ours) | Heavy (<15%) | 0.307 | 0.447 | 0.493 |

| Vidar | Heavy (<15%) | 0.186 | 0.322 | 0.573 |

| AnyPos (Single-View) | Heavy (<15%) | 0.159 | 0.306 | 0.538 |

| ResNet | Heavy (<15%) | 0.150 | 0.291 | 0.679 |

| StableIDM (Ours) | Light (>15%) | 0.286 | 0.476 | 0.142 |

| Vidar | Light (>15%) | 0.194 | 0.454 | 0.163 |

StableIDM achieved a 12.1% improvement in offline accuracy over the strongest baseline (Vidar) in the heavy truncation regime. Notably, while baselines degrade sharply as truncation increases, StableIDM maintains a stable L1 distance and accuracy profile.

Beyond the Lab: Real-World Replay and VLA Deployment

Physical robot experiments confirmed that offline stability translates to operational resilience. We tested the model on complex tasks including Microwave Operation (articulated doors) and Sink Cleaning (occlusions in recessed areas).

- Direct Execution (Open-Loop Replay): StableIDM achieved an average task success rate of 47.4%, outperforming Vidar (37.7%). The robustness is most evident in the “Sink Cleaning” task; while Vidar’s success rate crashes to 29.1% due to occlusion, StableIDM maintains 46.2%.

- Video-Plan Decoding: Acting as a policy decoder for synthetic visual plans, StableIDM achieved a 53.8% grasp success rate, proving its ability to smooth over the artifacts and “interpenetration” common in generated video plans.

- High-Fidelity Annotation (The Data Engine): StableIDM serves as a scalable labeling engine. Automatically annotating generated video data with StableIDM improved downstream Vision-Language-Action (VLA) task success by 17.6% compared to models trained solely on human-annotated data.

Conclusion: Bridging the Perception-Control Gap

StableIDM demonstrates that handling partial observability is not merely a vision problem—it is a control stability requirement. By integrating geometry-aware spatial reasoning with motion continuity constraints, we provide a robust path for reliable robot deployment in unconstrained environments.

Future work will explore multi-view extensions to further enhance geometric observability and the joint optimization of video generation and IDM modules to eliminate the representation mismatch between synthetic plans and real-world execution.

Key Takeaways

- The Vanishing Arm Problem: Truncation causes “signal collapse” in standard models; StableIDM uses Robot-centric Masking via SAM to prevent this background confusion.

- Geometric Recovery: By using 4 canonical directions and TCN dilations (1,2,4,8), the model repairs missing spatial data using temporal context.

- Unrivaled Robustness: StableIDM delivers a 12.1% accuracy boost in severe truncation and remains stable in tasks where baselines (like Vidar) drop to sub-30% success.

- Dual Utility: StableIDM is both a real-time policy and a high-fidelity data engine, bridging the gap between high-level generative plans and low-level physical execution.

Read the full paper on arXiv · PDF