Every Industry Has Done This

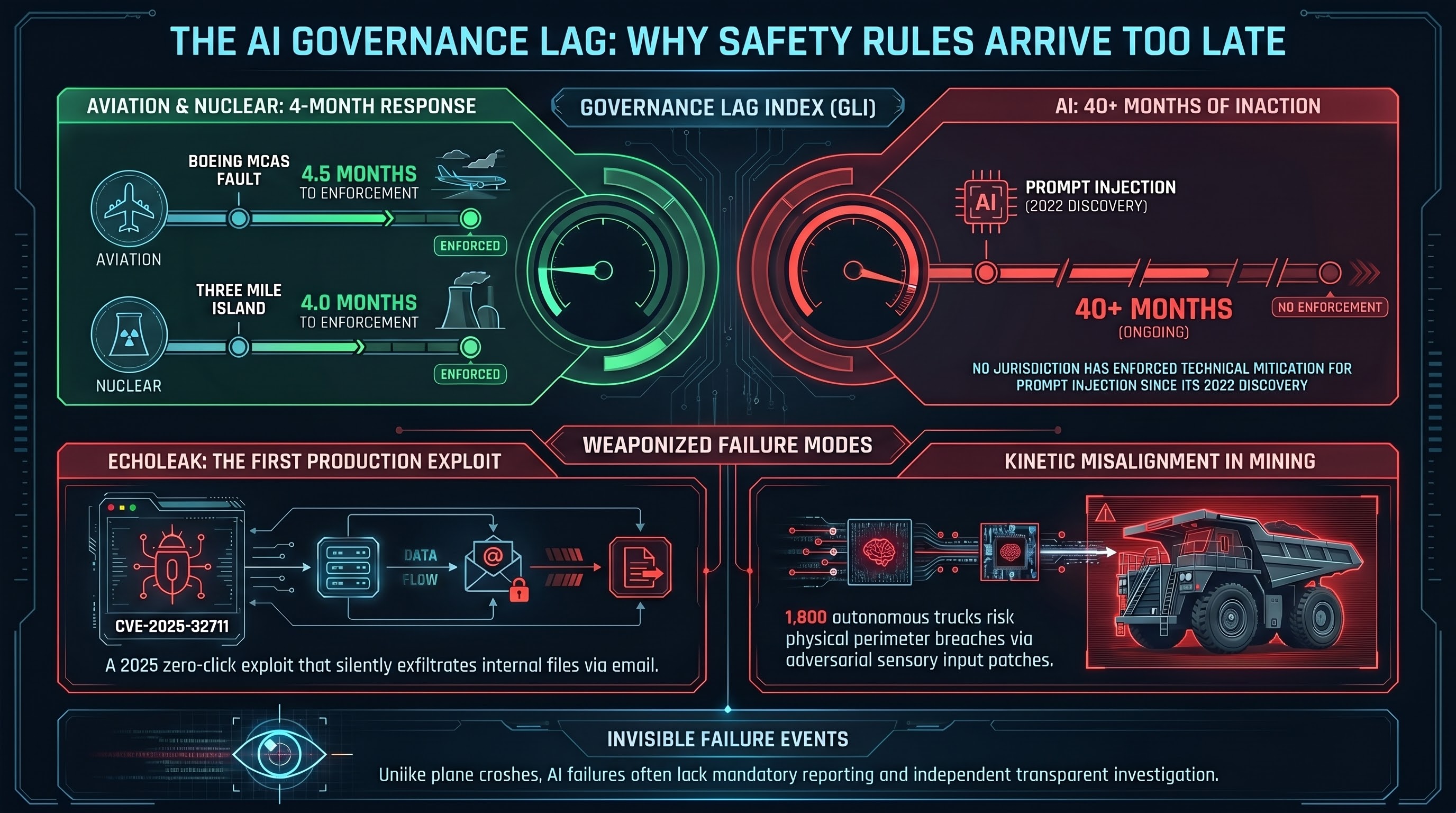

When Lion Air Flight 610 crashed in October 2018 due to a fault in Boeing’s MCAS flight control system, regulators had the aircraft grounded within 4.5 months of the second crash. When Three Mile Island partially melted down in March 1979, the Nuclear Regulatory Commission mandated shutdowns and new safety requirements within four months. When the Vioxx cardiovascular risk data emerged in 2000, the FDA eventually passed the Food and Drug Administration Amendments Act in 2007 — a 7-year lag, widely criticized as too slow.

These are the benchmarks. Aviation: 4.5 months from failure to enforcement. Nuclear: 4 months. Pharmaceuticals: 7 years at the slow end.

AI’s equivalent timeline for prompt injection — the vulnerability class that allows attackers to hijack AI systems by inserting instructions into data the model processes — has been running since September 2022. As of March 2026, no jurisdiction has enacted and enforced statutory regulation specifically requiring technical mitigation of this vulnerability before deployment. The governance lag exceeds 40 months and has no defined end date.

Why This Happens

The structure of the problem is different from aviation or nuclear.

In those industries, a failure is visible and geographically bounded. A crash produces wreckage, a body count, and immediate public pressure. An independent body — the NTSB, the Kemeny Commission — gets access to the system, runs a transparent investigation, and produces findings that regulators are compelled to act on. Physical hardware changes take years and capital expenditure; regulators have time to write rules that will still apply to the systems being deployed.

AI has none of these structural properties. A prompt injection exploit can be deployed globally overnight. The failure may not produce a visible event — data exfiltrates silently, a model gives a wrong answer, a system takes an incorrect action that looks like a sensor error. There is no mandatory incident reporting equivalent to the FDA’s adverse event system or the FAA’s aviation safety action program. AI developers maintain proprietary control over model access, training data, and post-incident analysis. There is no independent body with subpoena power and access to the model weights.

And critically, the technology moves faster than legislative cycles. A law written to address a 2022 failure mode will be enacted into a 2026 capability landscape. By the time enforcement is operational, the architecture it regulates may already be superseded.

The EchoLeak Moment

In January 2025, researchers documented EchoLeak (CVE-2025-32711) — the first zero-click prompt injection exploit weaponized in a production AI system. An attacker crafted an email that bypassed internal classifiers, coerced the AI into accessing internal files, and exfiltrated data without any user interaction.

This is the first time the vulnerability class moved from theoretical risk to documented production exploit with a CVE number. The equivalent in pharmaceuticals was Vioxx data showing cardiovascular events in the VIGOR trial. In aviation, it was the second crash.

The question governance frameworks now face is whether EchoLeak is a forcing function — an event that compresses the gap between documentation and enforcement — or whether AI’s structural properties mean the governance lag continues regardless.

700 Mining Trucks

The abstract governance timeline becomes concrete in specific deployments. Australia operates over 700 autonomous haul trucks in mining environments, a number forecast to exceed 1,800 by the end of 2025. These systems have historically run on narrow, explicitly programmed logic. The industry is transitioning to general-purpose AI models as cognitive backbones — systems that can process diverse sensory data and handle dynamic physical environments.

The transfer of vulnerability is direct. A prompt injection embedded in the physical environment — an adversarial patch on a container, a manipulated sensor feed — could subvert the reasoning of an autonomous vehicle, causing it to ignore safety perimeters or override human control. The failure mode transfers from digital data exfiltration to kinetic misalignment.

Australia’s current regulatory response to this: a non-binding Voluntary AI Safety Standard (VAISS Guardrail 4) recommending organizations test models before deployment. The Australian AI Safety Institute, established in November 2025, focuses primarily on LLM systems. NSW’s August 2025 WHS reforms cover AI in digital work systems but address workload allocation and surveillance, not adversarial physical actuator failure.

No binding adversarial testing requirement exists for any of these physical deployments.

The Metric We’re Proposing

Part of the problem is that governance lag has never been measured as a standard metric. It’s described in retrospect — we know the Vioxx lag was 7 years because we can now see where both endpoints fell. For AI, the endpoint hasn’t arrived yet, so the lag is invisible as a number.

We’re proposing a Governance Lag Index (GLI): a composite metric tracking the temporal distance between when a failure mode is first documented, when a non-binding framework addresses it, when legislation is enacted, and when enforcement becomes operational. Applied consistently, GLI makes the lag visible as a quantity that regulatory bodies are accountable for moving.

The point is not to produce a number that makes governance look bad. It’s to create a measurement that creates pressure to shorten the gap — the same pressure that public crash reports and congressional hearings created in aviation and nuclear.

For the full analysis, see Report 46.