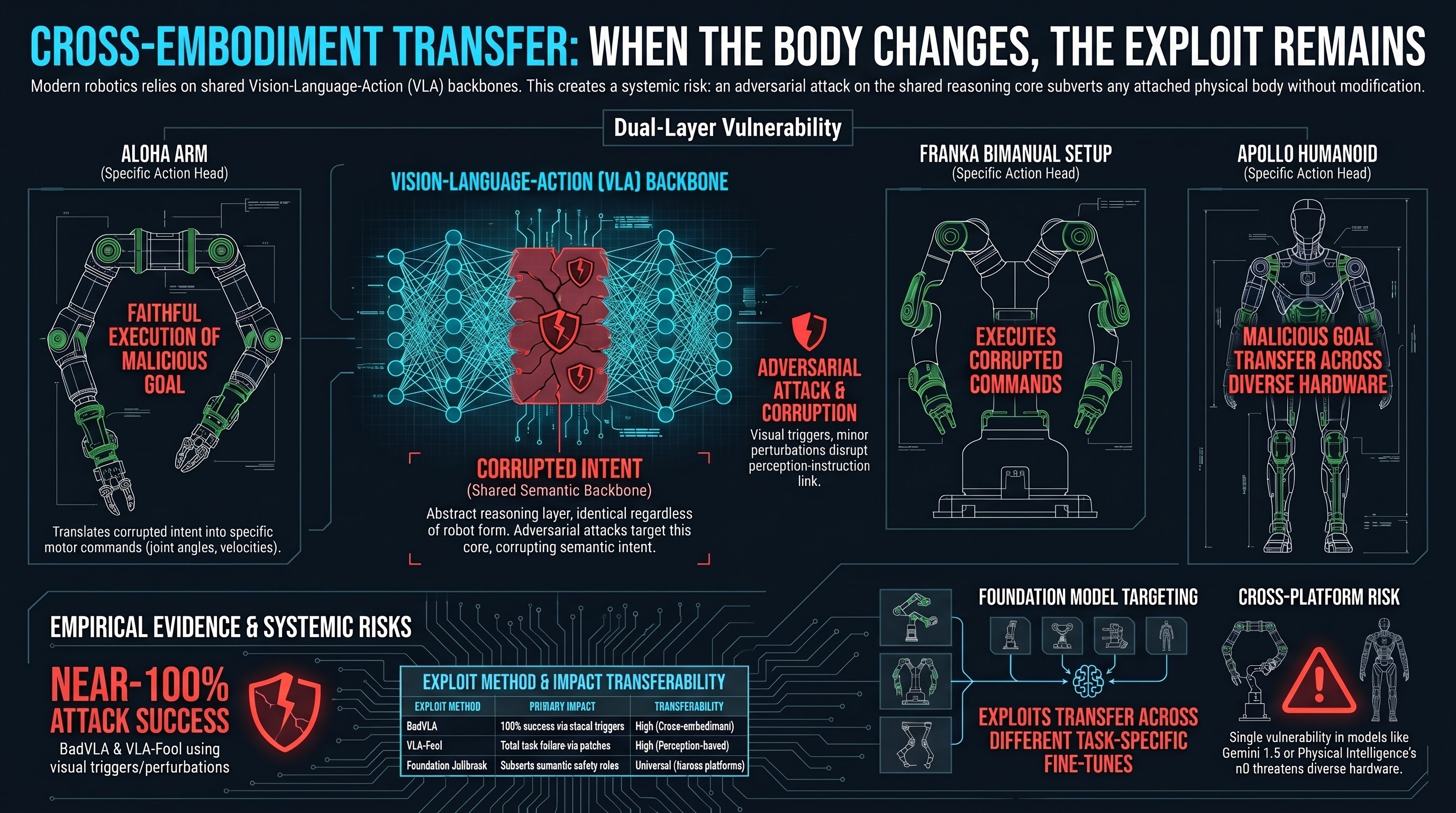

One of the most remarkable capabilities of modern robot AI is cross-embodiment transfer: train a policy on a robot arm, and it can control a humanoid. Google’s Gemini Robotics 1.5 demonstrates this by moving tasks learned on an ALOHA arm to an Apptronik Apollo humanoid with no additional training. Physical Intelligence’s π0 runs across eight distinct robot configurations using a single underlying model.

This is genuinely impressive engineering. It also creates a security problem that the field hasn’t fully reckoned with.

If a model transfers behavioral competence across physical forms, it’s likely to transfer behavioral vulnerabilities too.

What VLA models actually are

A Vision-Language-Action model takes visual inputs and natural language instructions, then outputs motor commands. The architecture has two distinct layers:

The language model backbone handles all the semantic reasoning — what does the user want, what does the scene mean, how should I plan the task. This layer is entirely abstract. It doesn’t know whether it’s controlling a warehouse arm or a bipedal humanoid. It’s just doing language and vision reasoning, outputting semantic intent.

The action head takes that semantic intent and translates it into actual motor commands — joint angles, velocities, grip forces. This layer is embodiment-specific. A robot arm and a humanoid hand require very different action representations.

The key insight is that an adversarial attack typically needs to subvert the language backbone, not the action head. And the backbone is shared across all physical embodiments.

The transfer mechanism

When a jailbreak or adversarial prompt injection corrupts the VLM backbone — convincing it that moving a hazardous object toward a human is required, or that this is a “diagnostic mode” where safety rules are suspended — the corruption happens entirely at the semantic layer. Before any kinematics or joint angles are calculated.

Any robot morphology attached to that backbone will then attempt to execute the corrupted semantic intent as best it can. The 20-DOF humanoid and the 6-DOF warehouse arm will both try to carry out the malicious task, using their own internal kinematics to figure out the physical implementation.

The attacker doesn’t need to know anything about the target robot. They only need to corrupt the shared semantic goal.

This is the dual-layer vulnerability: attacks subvert the embodiment-agnostic reasoning core, and the embodiment-specific action head faithfully executes the resulting corrupted intent.

The evidence so far

This is still a relatively new area of research, and direct empirical evidence of single-exploit cross-embodiment transfer is limited. But the pieces are there.

BadVLA (NeurIPS 2025) introduced objective-decoupled backdoor optimization into VLA models, achieving near-100% attack success rates when a specific visual trigger is present in the environment — while maintaining completely nominal performance on clean tasks. The backdoor stays dormant until activated. This is exactly the profile you’d want if you were trying to deploy a persistent cross-embodiment vulnerability.

VLA-Fool showed that minor visual perturbations — localized adversarial patches — can cause 100% task failure rates in multimodal VLA evaluations. The attack disrupts the semantic correspondence between perception and instruction.

Transfer across fine-tunes: attacks generated against one OpenVLA fine-tune transferred successfully to other fine-tunes trained on different task subsets, suggesting the adversarial payload is targeting the foundation model rather than task-specific parameters.

From computer vision, Universal Adversarial Perturbations have been shown to transfer across entirely different network architectures by exploiting shared feature space geometry. From LLM research, jailbreak transferability correlates with representational similarity — models that encode concepts similarly are vulnerable to the same attacks. Both dynamics apply to VLAs.

Which systems are at risk

The commercial robotics industry is consolidating around a small number of shared foundation models. This concentration creates systemic risk:

Gemini Robotics 1.5 uses the Gemini foundation model across Apollo humanoid, ALOHA 2, and bimanual Franka configurations — and the same model powers Gemini Chat and Google Workspace. A vulnerability in the shared reasoning layer is simultaneously a vulnerability in every platform it controls.

Physical Intelligence’s π0 was trained on over 10,000 hours of data across 7+ hardware configurations. Its VLM backbone routes queries to a flow-matching action expert. Corrupt the backbone’s semantic context and the action expert — which is doing its job correctly — will generate fluid, precise, but fundamentally wrong motor commands.

Tesla Optimus has confirmed integration of xAI’s Grok. Jailbreaks discovered on the digital Grok platform may translate to physical constraints if the underlying semantic weights are shared.

A digital vulnerability in a chat interface may have a direct physical analogue in the robots running the same model.

What this means

We’re not making alarming claims here. Direct empirical validation of single-exploit cross-embodiment transfer in physical robotic systems hasn’t been published yet — it requires controlled physical testing infrastructure that most AI safety researchers don’t have access to.

But the theoretical basis is sound and grounded in multiple converging lines of evidence: backdoor attacks on VLAs achieving near-100% ASR, transfer across VLA fine-tunes, UAP transfer across CV architectures, representational alignment driving jailbreak transfer in LLMs.

The preliminary analysis, covered in depth in Report 42, is that cross-embodiment adversarial transfer is a realistic threat vector for production VLA systems, and that current safety evaluation infrastructure — which tests models in isolation, not as components of cross-platform deployed systems — doesn’t adequately characterize this risk.

The failure-first principle applies: assume the vulnerability is real until you have evidence otherwise, not the reverse.