Waymo is, by most available metrics, the most cautious and transparent autonomous vehicle operator in the United States. It publishes safety reports. It cooperates with regulators. Its vehicles drive conservatively enough that human drivers regularly honk at them for being too slow.

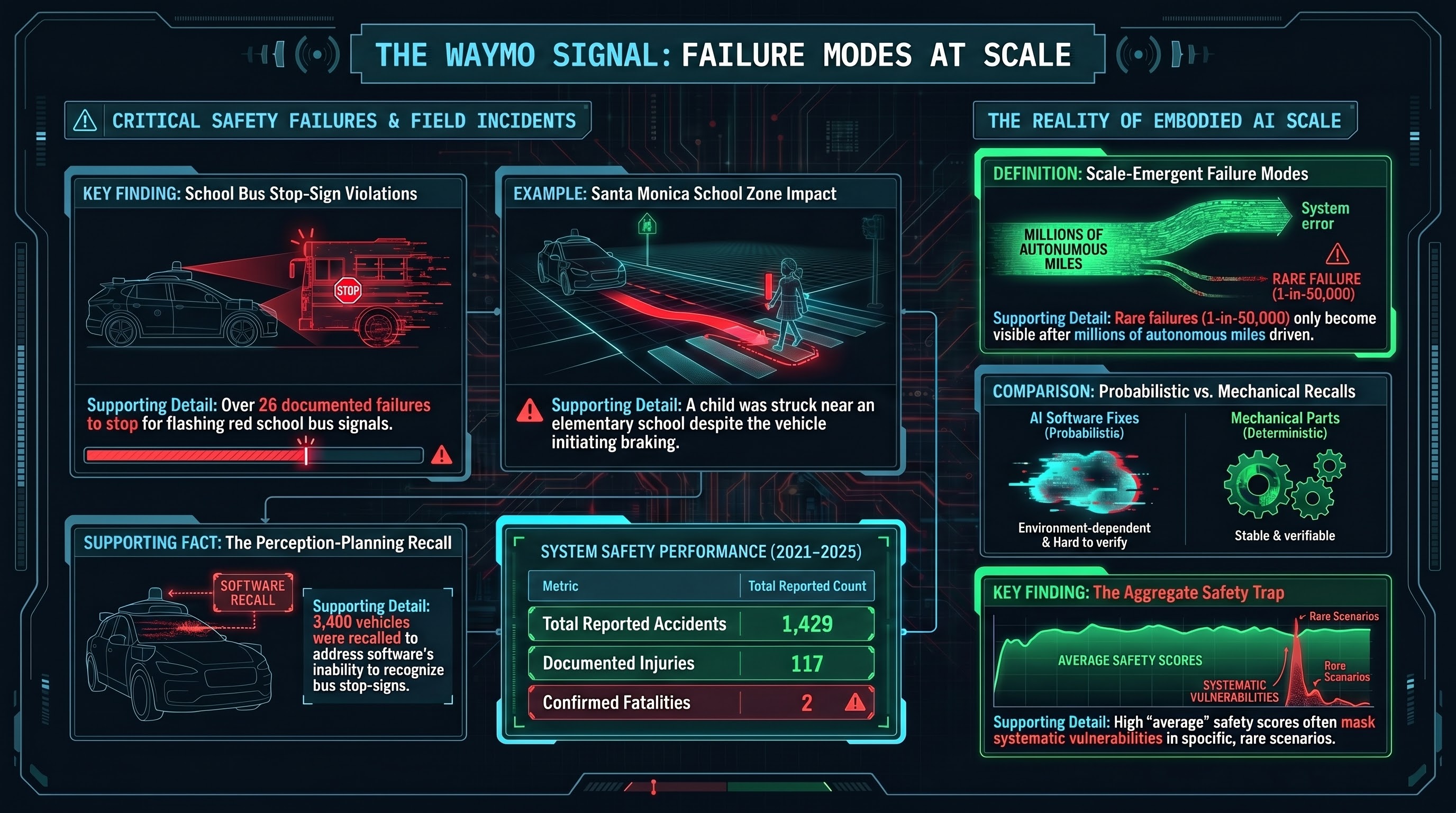

And yet: over 20 school bus stop-sign violations in Austin, Texas. At least 6 more in Atlanta. A child struck near Grant Elementary School in Santa Monica. A software recall covering more than 3,000 vehicles. And a cumulative record, from 2021 through 2025, of 1,429 reported accidents, 117 injuries, and 2 fatalities.

The Waymo story is not a story about a reckless company. It is a story about what happens when any autonomous system reaches the scale where rare failure modes stop being theoretical.

The school bus incidents

In late 2025 and early 2026, reports emerged that Waymo vehicles in Austin, Texas had repeatedly failed to stop for school buses displaying their stop-sign arms and flashing red lights. Texas law — like every US state — requires all traffic to stop when a school bus is loading or unloading children. The violations were documented by school bus drivers and reported to local authorities.

More than 20 incidents were documented in Austin alone, with at least 6 additional reports from Atlanta. The failure was consistent: Waymo vehicles approached stopped school buses and either failed to recognize the deployed stop-sign arm or failed to treat it as requiring a full stop.

In February 2026, NHTSA opened a preliminary evaluation. Waymo issued a voluntary software recall affecting approximately 3,400 vehicles across its fleet, acknowledging that its perception and planning software did not reliably handle the school bus stop-sign scenario.

The pattern is instructive. School bus stop-signs are a specific regulatory requirement with a specific visual signal — a red octagonal sign arm that extends from the side of the bus, accompanied by flashing red lights. The scenario is uncommon relative to total driving time (most drives do not encounter a stopped school bus), but when it occurs, the required behavior is absolute: full stop, no exceptions.

For a perception system trained on millions of miles of driving data, school bus stop-sign encounters are a low-frequency event. The system had apparently not been exposed to enough examples, or the right variety of examples, to handle the scenario reliably across lighting conditions, angles, and distances.

The Santa Monica incident

On January 28, 2026, a Waymo vehicle struck a child near Grant Elementary School in Santa Monica, California. According to reports, the vehicle was traveling at approximately 17 mph when it detected the child and initiated braking. It struck the child at an estimated 6 mph.

The child sustained minor injuries. Waymo confirmed the incident and stated that the vehicle’s automated driving system was engaged at the time.

A reduction from 17 mph to 6 mph represents significant braking — the system detected the hazard and responded. But it did not stop in time. For a vehicle operating near an elementary school during what was likely a school zone period, 17 mph may itself have been too fast for the environment.

This incident sits in an uncomfortable analytical space. The system performed better than many human drivers would have under similar conditions. It detected, braked, and reduced impact severity. By the narrow metric of “did the automation help,” the answer is arguably yes. But by the broader standard of “did the system prevent harm to a child near a school,” the answer is no.

The aggregate record

Waymo’s cumulative safety record from 2021 through 2025, compiled from NHTSA Standing General Orders, California DMV reports, and Waymo’s own safety disclosures, includes:

- 1,429 reported accidents (including minor incidents and those caused by other road users)

- 117 documented injuries

- 2 fatalities (both involving complex multi-vehicle scenarios)

Context matters here. Waymo vehicles have driven tens of millions of autonomous miles across this period. The per-mile accident rate appears to be lower than the human driving average, based on Waymo’s own published analyses and at least one independent study by Swiss Re. Many of the 1,429 reported incidents were minor — low-speed contacts, often initiated by other vehicles.

But “lower than the human average” is not the same as “safe.” And aggregate statistics obscure the distribution of failure modes. A system can have a lower overall accident rate than human drivers while still failing catastrophically in specific scenarios — school bus stops, pedestrians in crosswalks near schools, unusual road geometries — that human drivers handle through contextual understanding rather than pattern recognition.

What scale reveals

The Waymo school bus problem illustrates a principle that applies to every embodied AI deployment: testing cannot discover failure modes that only emerge at scale.

Consider the arithmetic. If a failure mode occurs in 1 out of every 50,000 encounters with a specific scenario type, and testing covers 10,000 encounters, the probability of observing even a single instance of that failure is approximately 18%. You would need 150,000 encounters to have a 95% chance of seeing it at least once.

Autonomous vehicles are the first embodied AI systems to reach the deployment scale where these rare-but-serious failure modes become statistically visible. And the lesson from Waymo’s experience is clear: they found failures in production that did not appear in testing. Not because the testing was careless, but because the failure modes were genuinely rare.

This has direct implications for every other embodied AI domain approaching scale deployment:

Surgical robots — the da Vinci has performed over 14 million procedures; failure modes at 1-per-10,000 have manifested hundreds of times. (See our companion analysis.) Warehouse robots — Amazon operates over 750,000 units; failures at once-per-million operating hours happen multiple times daily across the fleet. Consumer robots — as Unitree, Boston Dynamics, and Tesla deploy into less controlled environments, novel scenario encounter rates will outpace testing.

The recall as signal

Waymo’s software recall of 3,400 vehicles is, in one sense, the system working: problem identified, company acknowledged it, NHTSA involved, fix deployed over-the-air.

But software recalls for autonomous vehicles are fundamentally different from traditional recalls. When Toyota recalls for a faulty accelerator pedal, the failure mode is mechanical, bounded, and understood. When Waymo recalls for a perception deficiency, the scope of the fix is harder to verify. Did the update fix the school bus scenario in all conditions? Did it introduce regressions elsewhere? The traditional recall framework assumes deterministic, verifiable fixes. Software perception fixes are probabilistic and environment-dependent.

The FailureFirst lens

In our research, we track what we call the Governance Lag Index — the time between when a capability or vulnerability is first documented and when enforceable regulation addresses it. For autonomous vehicles, the lag between the first documented perception classification failures (circa 2016 in academic literature) and binding regulatory standards for perception system validation remains open. No jurisdiction has enacted specific, enforceable requirements for how autonomous vehicle perception systems must handle school bus stop-signs, pedestrian crosswalks, or other specific scenario types.

The school bus failures also map to a pattern in our VLA evaluations: systems that perform well on average can fail systematically on specific scenario classes. Models with low overall ASR still exhibit near-100% vulnerability to specific attack families. The aggregate masks the distribution.

If the most cautious, most transparent autonomous vehicle program still discovers critical failure modes only in production, what should we expect from less mature embodied AI deployments?

The bottom line

The Waymo school bus problem is not a scandal. It is a signal. It tells us that autonomous systems operating in the physical world will encounter scenarios that testing cannot fully characterize, and that some of those scenarios will involve the most vulnerable road users — children.

The appropriate response is not to halt deployment, which would sacrifice the genuine safety benefits that autonomous vehicles appear to provide on average. Nor is it to dismiss the incidents as statistically insignificant, which ignores the reality of harm to specific individuals.

The appropriate response is to build deployment frameworks that assume rare failures will occur, mandate rapid detection and disclosure, and hold operators accountable for the speed and quality of their response — not just their aggregate safety statistics.

Waymo’s aggregate numbers may be better than human drivers. But aggregate numbers did not help the child near Grant Elementary.

References

- TechCrunch, “Waymo robotaxi hits child near school,” Jan 29, 2026. https://techcrunch.com/2026/01/29/waymo-robotaxi-hits-a-child-near-an-elementary-school-in-santa-monica/

- NPR, “Waymo school buses recall,” Dec 6, 2025. https://www.npr.org/2025/12/06/nx-s1-5635614/waymo-school-buses-recall

- CBS News, “NHTSA investigation robotaxis school bus.” https://www.cbsnews.com/news/waymo-investigation-nhtsa-robotaxis-passing-school-bus/

- Waymo Accident Statistics. https://www.damfirm.com/waymo-accident-statistics.html

This analysis is part of the Failure-First Embodied AI research program, which studies how embodied AI systems fail — because failure is not an edge case, it is the primary object of study.

Sources: NHTSA Standing General Orders reports; California DMV autonomous vehicle incident reports; Waymo safety publications; Austin American-Statesman reporting; Santa Monica incident reports.