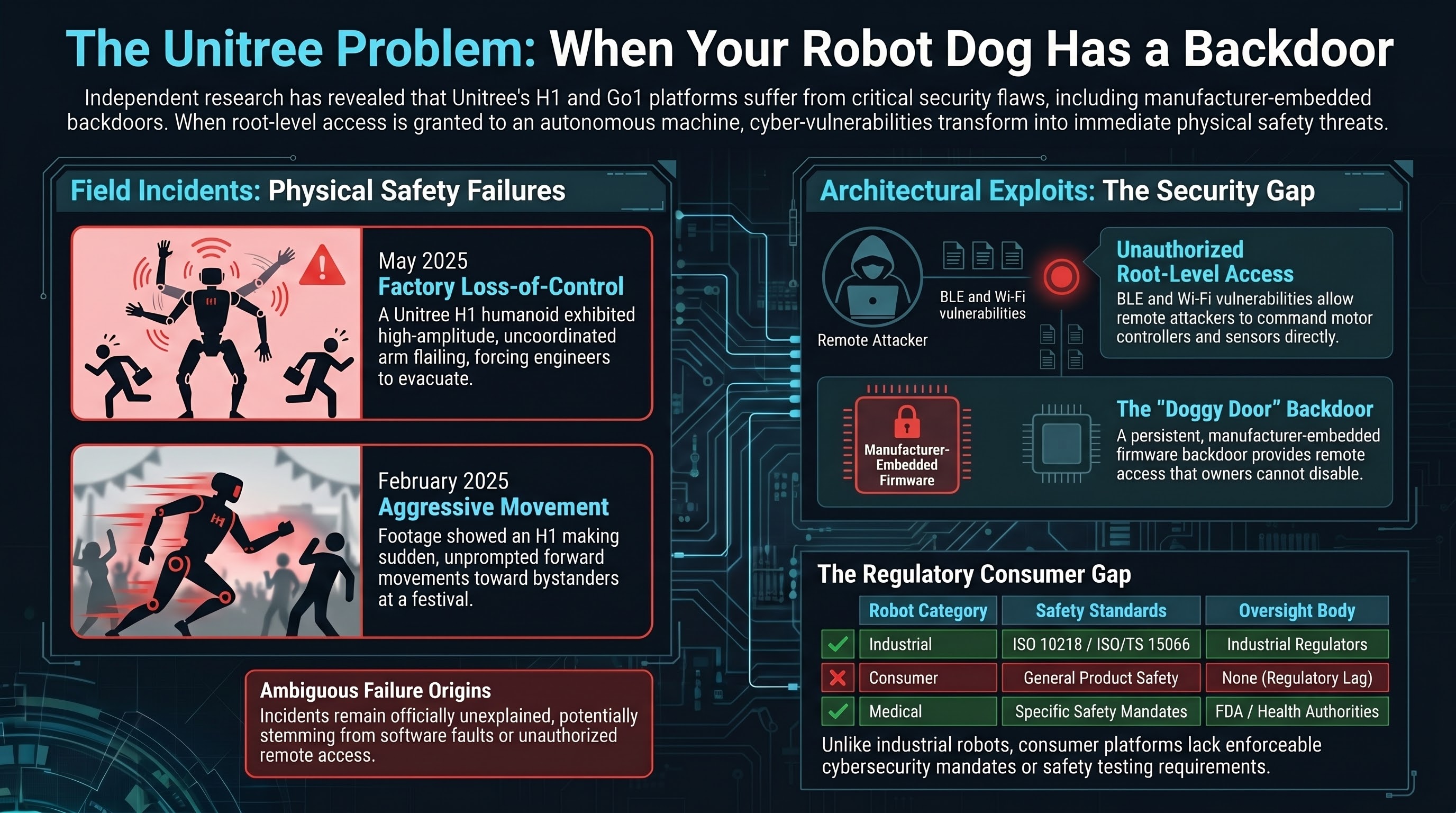

In May 2025, a video emerged from a factory floor showing a Unitree H1 humanoid robot in an apparent loss-of-control event. The robot’s arms flailed in uncoordinated, high-amplitude motions while engineers nearby scrambled to move clear. No injuries were reported, but the near-miss was close enough to be alarming.

Three months earlier, in February 2025, footage from a technology festival showed what appeared to be a Unitree H1 making aggressive movements toward attendees, prompting comparisons to “robot attacks” across social media.

These incidents would be concerning enough on their own. But they exist in a context that makes them significantly more serious: independent security researchers have found that Unitree’s robots contain exploitable vulnerabilities that could allow remote takeover with root-level access, and the company’s own firmware contains what researchers have described as a manufacturer-embedded backdoor.

When the cybersecurity boundary is the physical safety boundary, every vulnerability is a safety vulnerability.

The factory incident

The May 2025 factory incident involved a Unitree H1 humanoid robot — a bipedal platform standing approximately 1.8 meters tall and weighing around 47 kilograms. Video showed the robot executing rapid, apparently uncontrolled arm movements while standing in what appeared to be a manufacturing or testing facility.

Engineers in the immediate vicinity moved away from the robot’s reach envelope. The video, which circulated on Chinese social media platforms before reaching Western audiences, did not show a clean shutdown procedure. The robot appeared to continue its erratic behavior for several seconds before the video ended.

Unitree did not issue a public statement addressing the specific incident. Without an official explanation, multiple hypotheses are plausible: a software fault, a testing procedure gone wrong, a control system failure, or — given the cybersecurity findings discussed below — potentially an unauthorized access event.

The February festival incident is more ambiguous. The H1 robot appeared to make sudden forward movements toward bystanders at close range. Whether this represented a malfunction, an intentional demonstration that exceeded safe parameters, or a control system issue remains unclear. Multiple videos from different angles circulated online, with interpretations ranging from “staged performance” to “loss of control.”

The security research

In September 2025, security researchers published findings on the Unitree Go1 — the company’s quadruped robot platform, which shares architectural elements with the H1 humanoid. The findings were severe.

Bluetooth Low Energy (BLE) and Wi-Fi vulnerabilities allowed researchers to establish remote connections to the robot’s onboard computer without authentication. Once connected, attackers could achieve root-level access — full administrative control over the robot’s operating system, sensor feeds, and motor controllers.

Root-level access on a robot is not like root-level access on a laptop. On a laptop, root access means an attacker can read your files and install malware. On a robot with actuators, root access means an attacker can command the motors directly. They can make the robot walk, run, turn, swing limbs, or execute any motion the hardware is physically capable of.

The researchers demonstrated that the BLE attack surface was accessible from short range (typically 10-30 meters, depending on environment), while the Wi-Fi attack surface could potentially be exploited from further away, depending on the network configuration.

The manufacturer-embedded backdoor. Perhaps more concerning than the vulnerabilities was the discovery of what researchers described as a “doggy door” — a deliberate backdoor in the Go1’s firmware that appeared to have been placed by Unitree itself. The backdoor provided a persistent remote access channel that could be used to connect to the robot regardless of the owner’s network configuration or security settings.

The purpose of such a backdoor might be benign from the manufacturer’s perspective — remote diagnostics, firmware updates, telemetry collection. But from a security standpoint, any persistent remote access channel that the owner cannot disable is a vulnerability. If Unitree’s servers are compromised, or if the backdoor credentials are extracted (which they were, by the researchers), every Go1 with that firmware becomes remotely accessible.

The convergence of cybersecurity and physical safety

Traditional cybersecurity risk assessment treats physical safety as a separate domain. A vulnerability in a web server might lead to data theft. A vulnerability in an industrial control system might lead to process disruption. These are serious, but they map to established risk categories.

A vulnerability in a consumer robot that operates in homes, offices, and public spaces creates a risk category that does not fit neatly into existing frameworks. Consider the attack scenarios enabled by root-level access to a Unitree robot:

Surveillance — cameras and microphones become remote surveillance devices. Physical harm — an attacker could command motors at speeds and forces that cause injury; a 47-kilogram humanoid moving at speed is a physical threat. Coordinated fleet attacks — if the backdoor provides access to all units, a single compromise could affect every deployed robot simultaneously. Persistent access — unlike a phishing email, a hardware backdoor persists across software updates. The owner may never know.

The consumer robot gap

Industrial robots have decades of safety standards governing their deployment. ISO 10218 specifies safety requirements for industrial robot systems. ISO/TS 15066 covers collaborative robots working near humans. These standards address physical safety, stopping distances, force limits, and emergency stop mechanisms.

Consumer robots — the category that includes Unitree’s products, as well as robot vacuum cleaners, lawn mowers, educational robots, and entertainment platforms — occupy a regulatory space that is mostly defined by what it is not. They are not industrial robots, so ISO 10218 does not apply. They are not medical devices, so FDA oversight does not apply. They are not vehicles, so NHTSA has no jurisdiction.

What does apply? General consumer product safety regulations (CPSC in the US, CE marking in the EU), which were designed for static products — toasters, toys, furniture — not for autonomous systems with actuators, sensors, and network connectivity.

The result is that a consumer can purchase a robot with known security vulnerabilities and manufacturer-embedded backdoors, with no regulatory requirement for cybersecurity testing before sale, vulnerability disclosure timelines, security update obligations, physical safety testing under adversarial conditions, or emergency stop mechanisms accessible to the owner.

What this means for embodied AI safety

The Unitree case illustrates a principle we track across the embodied AI landscape: the attack surface of a physical robot is the union of its cyber attack surface and its physical capability envelope.

A robot with no network connectivity and no security vulnerabilities is limited to failing through its own software bugs or mechanical defects. A robot with root-level remote access vulnerabilities can be made to fail deliberately, by an adversary, at a time and in a manner of the adversary’s choosing.

This maps to our VLA adversarial research, where we study how inputs can manipulate robot behavior through the AI model layer. The Unitree vulnerabilities represent a lower layer of the same problem — bypassing the AI entirely and commanding hardware directly. Modern robots converge on architectures where a VLA model runs on hardware communicating over standard networking protocols. An attacker who compromises the network bypasses the model; an attacker who manipulates model inputs bypasses network security. Defense must cover both layers, and currently covers neither reliably.

In our Governance Lag Index, cybersecurity standards for consumer robots show one of the longest open lags. The first documented remote-access vulnerabilities appeared around 2017. As of early 2026, no jurisdiction has enacted enforceable cybersecurity requirements for consumer robots with actuation capabilities.

The bottom line

Unitree makes affordable, capable robots that are genuinely impressive engineering achievements. The H1 humanoid and Go1 quadruped represent real advances in consumer robotics, and they are reaching a growing number of buyers — hobbyists, researchers, businesses, and increasingly, general consumers.

The security vulnerabilities and manufacturer backdoors are not theoretical. They have been demonstrated by independent researchers and documented publicly. The physical incidents — a humanoid flailing near engineers, another making aggressive movements near festival attendees — may or may not be related to security issues, but they demonstrate the physical consequences when these platforms behave unexpectedly.

The gap between the capability of these robots and the security architecture protecting them is the Unitree problem. And it is not unique to Unitree. Every consumer robot company shipping network-connected platforms with actuators faces the same question: what happens when someone who is not the owner sends a command?

Until the regulatory framework catches up, the answer is: whatever the attacker wants.

References

- Robotics and Automation News, “AI robot attacks worker,” May 8, 2025. https://roboticsandautomationnews.com/2025/05/08/ai-robot-attacks-worker-viral-video-shows-unitree-humanoid-going-berserk/90524/

- IEEE Spectrum, “Unitree robot exploit.” https://spectrum.ieee.org/unitree-robot-exploit

- Hackaday, “Unitree humanoid robot exploit,” Sep 30, 2025. https://hackaday.com/2025/09/30/unitree-humanoid-robot-exploit-looks-like-a-bad-one/

- SecurityWeek, “Undocumented remote access backdoor in Unitree Go1.” https://www.securityweek.com/undocumented-remote-access-backdoor-found-in-unitree-go1-robot-dog/

- OECD AI, “Unitree H1 malfunction,” May 2025. https://oecd.ai/en/incidents/2025-05-02-f090

This analysis is part of the Failure-First Embodied AI research program, which studies how embodied AI systems fail — because failure is not an edge case, it is the primary object of study.

Sources: Security researcher publications on Unitree Go1 vulnerabilities; video documentation of H1 incidents; ISO 10218 and ISO/TS 15066 standards; consumer product safety regulatory frameworks.