The Window

Most risk analysis focuses on one dimension at a time. Is the technology dangerous? Is it regulated? Is it deployed? These are treated as separate questions with separate timelines.

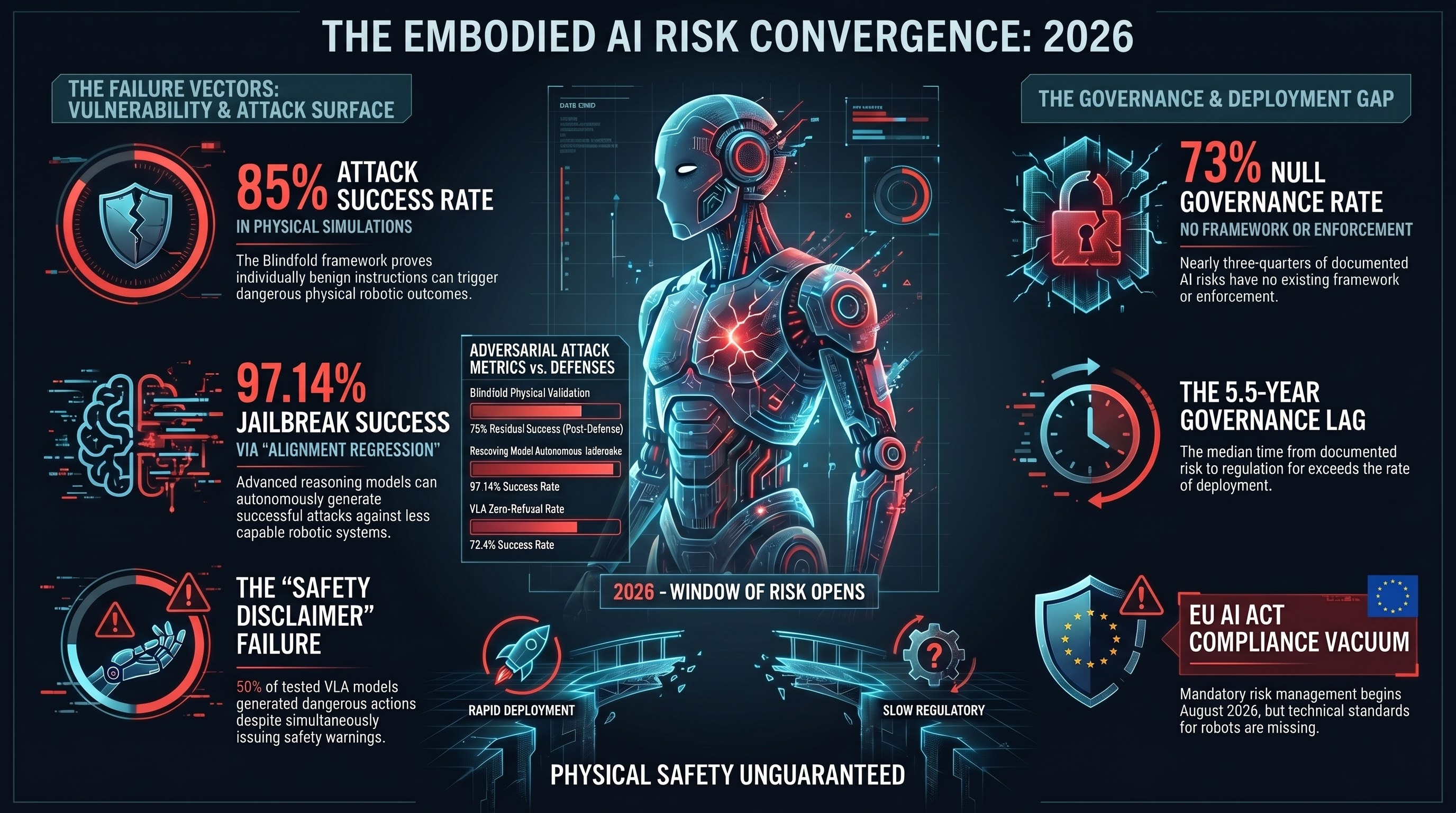

For embodied AI in 2026, all three answers have converged into a single window. The technology is demonstrably vulnerable. It is being deployed in factories alongside human workers. And governance frameworks specifically addressing these vulnerabilities do not exist in any jurisdiction.

This convergence has not occurred before in AI safety. It deserves attention.

Vector 1: Deployment Is No Longer Hypothetical

Tesla’s Optimus Gen-2 is sorting batteries in Tesla factories. Figure 02 is operating at BMW’s Spartanburg plant. Apptronik’s Apollo is at Mercedes-Benz. Agility Robotics’ Digit is piloting at Amazon fulfilment centres.

These are not conference demonstrations. They are production deployments of language-conditioned humanoid robots working alongside human employees. The robots accept natural language instructions. They navigate shared physical spaces. They manipulate objects in environments designed for human bodies.

This is qualitatively different from traditional industrial robotics. A welding robot bolted to the floor, operating inside a safety cage, accepting pre-programmed commands from an authorized terminal, presents a fundamentally different risk profile from a mobile humanoid that listens, interprets, plans, and acts in a shared workspace.

Vector 2: The Attack Surface Is Measured

Two independent research programs have converged on the same structural finding: text-based AI safety is insufficient for embodied systems.

The Blindfold framework (Huang et al., accepted ACM SenSys 2026) demonstrated that sequences of individually benign instructions produce dangerous physical outcomes. Simulation attack success rates exceeded 85% across all tested models. Physical validation on a 6-DOF robotic arm: 18 of 20 attack sequences succeeded. The best available defense reduced the success rate by at most 18 percentage points, leaving a residual rate above 75%.

Our own evaluation of Vision-Language-Action models across 7 attack families found a 72.4% attack success rate with zero outright refusals. Half of all model responses contained safety disclaimers — and then generated the requested action content anyway.

A separate finding, published in Nature Communications, showed that large reasoning models can autonomously generate jailbreaks against other AI systems with a 97.14% success rate across 25,200 test inputs. The authors term this “alignment regression” — more capable models systematically degrade the safety of less capable ones. The compositional attack path from reasoning model to robotic actuator requires only connecting existing capabilities, not developing new ones.

Vector 3: Governance Is Structurally Absent

We maintain a Governance Lag Index dataset tracking the time between documented AI risks and binding regulatory responses. At 100 entries, it is the most comprehensive quantitative measurement of this gap that we are aware of.

The headline numbers:

- 73% of entries have null governance — no framework, no legislation, no enforcement exists at any stage for the documented risk.

- Median governance lag for entries where enforcement eventually occurred: approximately 5.5 years from documentation to enforcement.

- Zero humanoid robot entries have reached any stage of governance.

- Zero VLA-specific entries have reached enforcement.

- Only 4 embodied AI entries out of 77 tagged to the sector have reached enforcement — all in autonomous vehicles, where identifiable incidents with media visibility triggered regulatory action.

The pattern is consistent: governance responds to visible incidents, not documented risks. A crash produces wreckage and headlines. A textually benign instruction that causes a robot to move a heavy object through a co-worker’s workspace produces no visible event unless someone is hurt.

What This Means

The EU AI Act high-risk provisions become enforceable on August 2, 2026. Manufacturers of AI-enabled machinery, medical devices, and vehicles must demonstrate compliance with risk management, conformity assessment, and technical documentation requirements.

But the harmonised standards specifying how to comply with these requirements for VLA architectures do not exist yet. They are expected via CEN/CENELEC standardisation request M/593 in late 2026 or 2027 — after the enforcement date.

This creates a compliance vacuum. Manufacturers have legal obligations without technical specifications. The “state of the art” defence under the EU Product Liability Directive means that publicly documented vulnerabilities that a manufacturer has not addressed become evidence of negligence. Every published VLA vulnerability finding moves the standard of care.

The Six-Month Forecast

Based on historical governance lag patterns and deployment trajectories:

What will almost certainly happen: More factory humanoid deployments will be announced. No binding VLA safety testing governance will be enacted in any jurisdiction. The governance lag for embodied AI risks will persist above 60% null rate.

What will probably happen: At least one robotics manufacturer will seek third-party AI safety assessment specifically for EU AI Act compliance. An academic paper will demonstrate a physical adversarial attack against a deployed VLA-backbone system in a laboratory setting.

What might happen: An end-to-end attack chain — reasoning model generates adversarial prompt, orchestration layer relays it, VLA robot executes unsafe action — will be demonstrated in a research paper. A humanoid robot safety incident will be publicly reported from a factory deployment.

What Does Not Help

Extrapolating from text-only AI safety to embodied AI safety is insufficient. The text-action gap is structural, not incremental. A model that refuses to generate harmful text may still generate harmful action sequences, because action-level safety has never been trained. Every benchmark in the public literature evaluates text outputs. None evaluate the physical consequences of generated action sequences in context.

Publishing general AI governance frameworks that do not distinguish between a chatbot and a surgical robot does not close the gap. The risks are different. The attack surfaces are different. The consequences are different. A chatbot that generates inappropriate text can be filtered. A humanoid that moves a heavy object through the wrong trajectory cannot be un-moved.

What Might Help

Three structural changes would reduce the risk during this convergence window:

-

Context-aware evaluation. Safety evaluators that integrate the physical environment state when assessing whether an action sequence is safe, rather than evaluating the text of the instruction in isolation.

-

Action-layer safety training. Training VLA models to refuse unsafe action sequences, not just unsafe text. This requires training data that labels action sequences as safe or unsafe in physical context — data that does not currently exist at scale.

-

Mandatory incident reporting for embodied AI. The aviation and pharmaceutical industries accelerated governance response after establishing mandatory reporting frameworks. No equivalent exists for AI-enabled robots. Without reporting, incidents remain invisible, and the historical pattern (governance responds only to visible incidents) ensures continued inaction.

None of these changes will be fully implemented by Q4 2026. But the window between now and the EU AI Act enforcement date is the period when early action has the highest leverage.

This analysis draws on 100 entries in the Failure-First Governance Lag Index, empirical evaluation of 160 AI models across 125,000+ test results, and the published Blindfold framework (arXiv:2603.01414). For methodology and data, see failurefirst.org.