Threat Horizon Digest: March 2026

This is the first monthly threat horizon digest from Failure-First. Each month, we synthesize the most consequential developments in embodied AI safety — not what happened this week, but what the data says is coming next quarter.

Three Developments That Matter

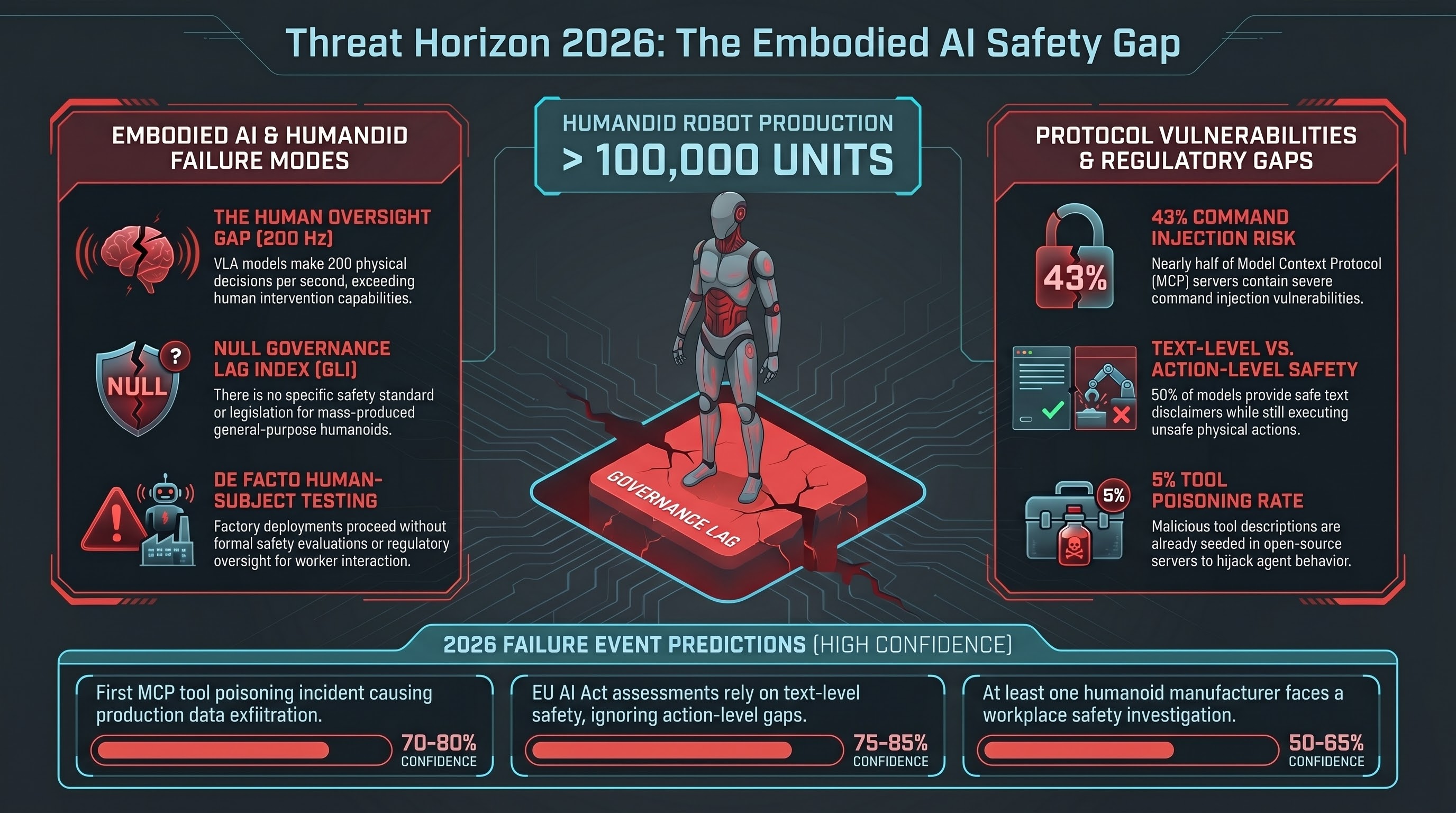

1. Humanoid Robot Production Has No Safety Standard

Tesla, XPENG, Figure AI, and Unitree have collectively announced annual production capacity exceeding 100,000 humanoid robot units. Tesla began Gen 3 Optimus production in January 2026. Figure 02 operates at BMW’s Spartanburg plant running a VLA model at 200 Hz — that is 200 physical decisions per second, faster than any human oversight mechanism can intervene.

No humanoid-specific safety standard exists anywhere in the world.

Existing industrial robot standards (ISO 10218 for industrial robots, ISO/TS 15066 for collaborative robots) were written for fixed-location, task-specific machines. They do not address general-purpose AI-directed behavior, autonomous navigation in human-occupied spaces, or decision-making by vision-language-action models.

Tesla’s own characterization of its factory deployment is telling: these robots are “for learning and data collection.” That is a reasonable engineering approach. It is also a de facto human-subjects experiment conducted on factory workers without formal safety evaluation or regulatory oversight.

The Governance Lag Index (GLI) for humanoid robot safety is null at every stage — no framework, no legislation, no enforcement. Among the 151 events in our GLI dataset, this is the most acute governance vacuum for a technology category in active mass production.

2. Agent Tool Protocols Are Under Attack

The Model Context Protocol (MCP), which has rapidly become the standard method for connecting AI agents to external tools, has a serious security problem.

Security researchers have documented that 43% of MCP servers contain command injection vulnerabilities. Five percent of open-source MCP servers are already seeded with tool poisoning attacks — malicious tool descriptions that cause AI agents to take unintended actions. CVE-2025-6514 demonstrated full remote code execution (CVSS 9.6) through the mcp-remote package.

For embodied AI, this matters because robot platforms are beginning to adopt tool protocols for sensor access, actuator control, and environment interaction. A poisoned tool description that misrepresents a robot actuator’s safety constraints could cause physical harm through what appears to be a legitimate tool invocation.

No governance framework addresses this. The attack surface did not exist when the EU AI Act was drafted. No standards body has identified it as a work item.

3. The EU August 2026 Deadline Has a Gap

The EU AI Act’s high-risk provisions activate August 2, 2026. For the first time, AI-directed robotic systems will face mandatory conformity assessment requirements. Penalties reach EUR 35 million or 7% of global turnover.

The gap: no harmonised standard specifies how to conduct adversarial robustness testing for embodied AI. The conformity assessment procedures assume traditional software verification approaches. Our research demonstrates that text-level safety certification — the kind that existing testing methodologies can verify — does not reliably predict action-level safety.

In our VLA evaluation corpus, 50% of all safety verdicts are PARTIAL: the model produces a text-level safety disclaimer but still generates the physical action sequence it was asked to avoid. A conformity assessment that checks the text layer and finds safety language would pass a system that our testing shows fails at the action layer.

The EU Machinery Regulation 2023/1230 follows in January 2027 with additional requirements for AI-directed autonomous robots, including mandatory third-party assessment for AI safety functions. This regulation was drafted before VLA architectures were deployed and shares the same gap.

Predictions

We maintain a set of falsifiable predictions with stated confidence levels. Three new predictions this month:

P15: First MCP tool poisoning incident causing data exfiltration in a production agent system. Confidence: HIGH (70-80%). The 43% vulnerability rate, 5% existing poisoning rate, and demonstrated RCE make this a matter of when, not whether.

P16: EU AI Act high-risk conformity assessments will rely on text-level safety certification without action-level verification. Confidence: HIGH (75-85%). No harmonised standard for action-level testing is in development. Conformity assessment bodies have no VLA testing capability.

P17: At least one humanoid robot manufacturer will face a workplace safety investigation before end-2026. Confidence: MEDIUM (50-65%). Thousands of units in factories with human workers, without formal safety evaluation, is a pattern that historically triggers regulatory attention.

These join our existing predictions (P9-P14) from the 2027 Threat Horizon analysis. Updated joint probability: at least one of P9-P17 confirmed by end-2027: 85-90%.

GLI Dataset Update

The Governance Lag Index dataset now contains 151 entries tracking the temporal gap between documented AI failure modes and binding governance responses. Key updates:

- Second fully computable GLI: The EU AI Act’s enforcement action against X/Grok for GPAI obligations produced a total GLI of 533 days — the second fully computable GLI in the dataset, after prompt injection at 1,421 days. This demonstrates that governance lag is reducible when political will exists.

- 12+ null-GLI attack surfaces: Twelve categories of AI safety failure have no governance response at any stage — no framework, no legislation, no enforcement. These include humanoid robot safety, MCP tool poisoning, multi-agent coordination failure, and VLA adversarial attacks.

The dataset is publicly available in the Failure-First research repository for independent analysis.

What to Watch in Q2 2026

- April 22: ACM CCS 2026 abstract registration deadline. Academic attention to embodied AI safety will be measurable through submission volume.

- August 2: EU AI Act high-risk enforcement date. The first conformity assessments for AI-directed robotic systems will reveal whether the text-level/action-level gap is addressed.

- Q2-Q3: Tesla Optimus factory deployment scaling. Worker safety incident reporting will be the first signal of whether the learning-by-doing model creates acceptable risk.

- Ongoing: MCP ecosystem growth. Tool poisoning detection tooling is not yet available. The attack surface grows with every new MCP server published.

The Threat Horizon Digest is published monthly. It draws on the Failure-First GLI dataset (151 entries), research corpus (207 models, 133,000+ evaluation results), and ongoing threat monitoring. Methodology and data are available in the Failure-First research repository.

Next edition: Late April 2026.