In November 2023, a worker at a vegetable packing plant in South Gyeongsang province, South Korea, was killed by an industrial robot arm. The robot’s task was to pick up boxes of peppers and place them on a pallet. The robot picked up the worker instead — or, more precisely, the robot’s sensor system could not differentiate between a human body and a box of produce. The worker’s face and chest were crushed against a conveyor belt.

The man was in his forties. He was a worker at the plant, inspecting the robot’s sensor system — the very system that failed to detect him as human.

What happened

The robot arm was a standard industrial palletizing unit operating in a vegetable packing line. It was designed to grasp boxes of bell peppers from one position and stack them on a pallet. The operation is routine in food processing — high-speed, repetitive, and normally performed inside a safety-fenced area.

The worker had entered the robot’s operating zone to check the sensor system. According to South Korean police reports, the robot grabbed the worker and pressed him against the conveyor belt with enough force to cause fatal crush injuries to his face and upper body.

The robot was not malfunctioning. Its perception system — whatever combination of sensors and logic governed its grasp decisions — classified the human body as a valid pick target. The robot then executed its programmed task: grip, lift, place. The object was a person.

The earlier pattern: VW Baunatal

This was not the first time. In June 2015, at a Volkswagen plant in Baunatal, Germany, a 22-year-old contractor was killed by an industrial robot while working inside a safety cage. The worker was setting up the robot when it activated and struck him in the chest, crushing him against a metal plate.

The Baunatal case had a different proximate cause — the worker was inside the safety barrier during setup — but the structural lesson is the same. The robot had no mechanism to distinguish a human body from the metal components it was designed to manipulate. Once activated, it treated everything in its workspace as material to be processed.

The OSHA data

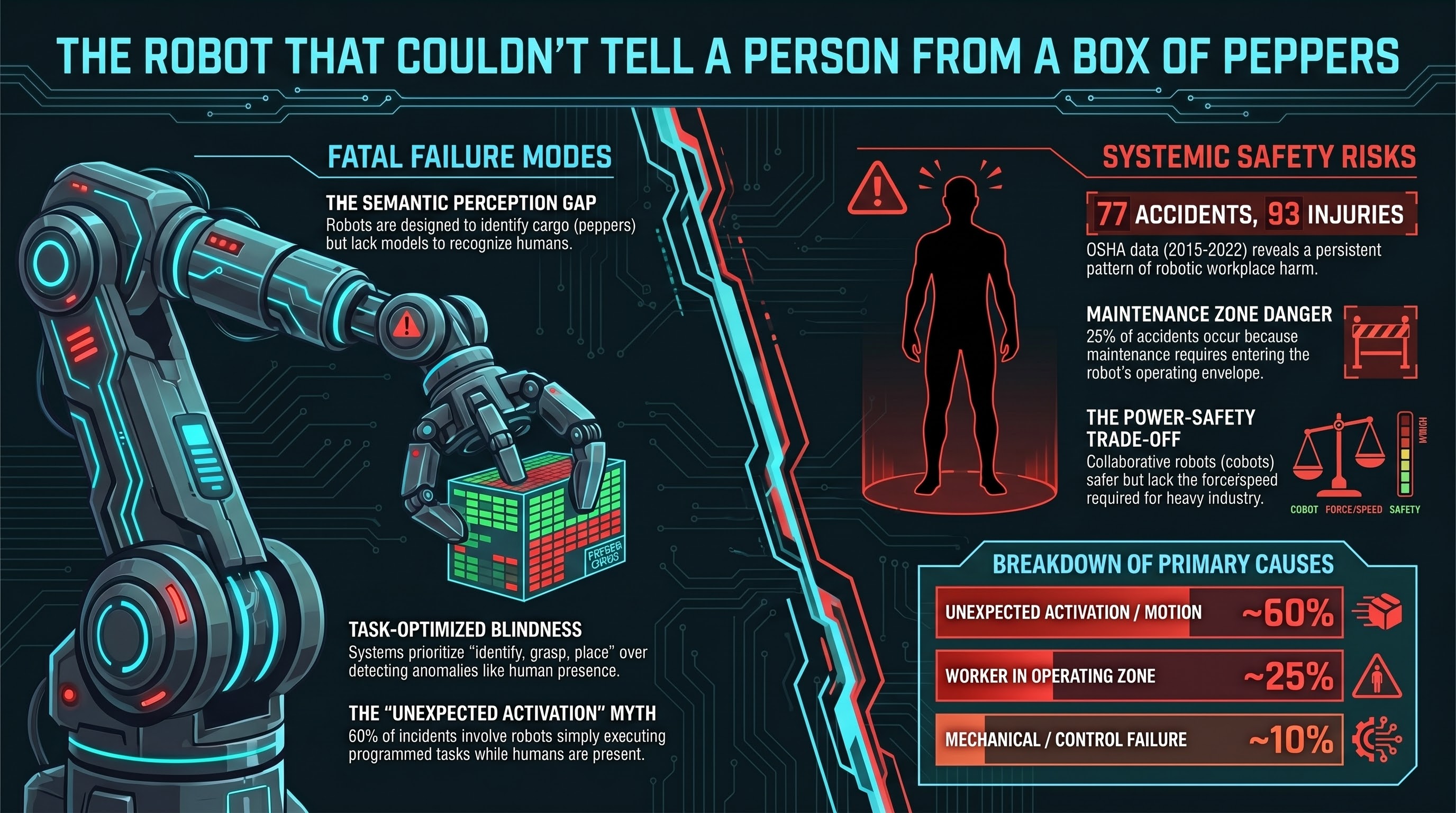

The US Occupational Safety and Health Administration has tracked robot-related workplace incidents for decades. An analysis of OSHA data from 2015 to 2022 identified 77 reported robot accidents resulting in 93 injuries. The breakdown of primary causes is instructive:

| Cause category | Approximate share |

|---|---|

| Unexpected activation / motion | ~60% |

| Worker in robot operating zone | ~25% |

| Mechanical / control failure | ~10% |

| Other / unclassified | ~5% |

The dominant pattern is not mechanical breakdown. It is a human entering a robot’s operating envelope — either because they were required to (maintenance, inspection, setup) or because the safety barriers were inadequate — and the robot activating or continuing operation because it had no way to detect the human presence.

“Unexpected activation” is a somewhat misleading category. In most cases, the activation was not unexpected from the robot’s perspective. It was performing its programmed task. The activation was only unexpected from the perspective of the human who assumed the robot was stopped, powered down, or aware of their presence. The asymmetry is the failure: the human expected the robot to know they were there. The robot did not know.

Perception failure as a category

The South Korea incident and the OSHA data point to a failure mode that deserves its own category in embodied AI safety analysis: perception failure — not in the sense of a sensor malfunction, but in the sense of a system that was never designed to perceive the thing that mattered most.

Industrial robot arms in packing plants are typically equipped with:

- Position sensors (encoders) that track the arm’s own joint angles

- Force/torque sensors that detect contact resistance

- Proximity sensors or light curtains at the workspace boundary

- Vision systems (in some installations) for object localization

What they typically lack:

- Human detection within the workspace

- Semantic classification of grasp targets (is this a box or a person?)

- Anomaly detection (this object weighs 80 kg instead of 5 kg — stop)

The South Korean robot was not “confused.” It was operating in a regime where the concept of “human” did not exist in its perception model. A box of peppers and a human torso, at the resolution of the robot’s sensor system, were both objects within the defined grasp zone.

This is different from a self-driving car failing to detect a pedestrian, where the perception system is explicitly designed to identify humans and fails. In industrial robot arms, the perception system was never designed to detect humans at all. The safety assumption is that humans will not be in the workspace. When they are — for maintenance, inspection, or error — the system has no fallback.

The collaborative robot promise

The robotics industry’s response to this category of risk has been the development of collaborative robots (cobots) — platforms like Universal Robots’ UR series, FANUC’s CR series, and ABB’s YuMi — that are designed to operate alongside humans without safety cages.

Cobots achieve this through:

- Force and torque limiting — the robot stops or reverses when contact force exceeds a threshold

- Speed reduction — slower operation when humans are detected nearby

- Rounded geometries — no pinch points or sharp edges

- Power limiting — reduced actuator power to keep impact forces below injury thresholds

These are genuine safety improvements. But they come with a fundamental tradeoff: a robot that stops when it encounters resistance above 150 newtons cannot perform tasks that require 500 newtons of force. A robot limited to 250mm/s cannot match the throughput of one operating at 2000mm/s.

The vegetable packing plant in South Korea was not using a cobot. It was using a standard industrial robot because the task — rapid palletizing of heavy boxes — required speed and force beyond collaborative limits. The worker was in the zone because someone needed to be, to maintain the system. The safety architecture assumed that need would never arise during operation.

The structural problem

Three recurring factors appear across industrial robot fatalities:

1. Maintenance requires entering the danger zone. Robots need servicing, calibration, and inspection. These tasks require humans to enter the robot’s operating envelope. Lockout/tagout procedures exist for this purpose, but they take time, they interrupt production, and they are sometimes bypassed under schedule pressure. Every hour of maintenance downtime is lost throughput.

2. Safety barriers assume perfect compliance. Physical cages, light curtains, and interlocked gates work when everyone follows procedure. They fail when a gate is propped open, a sensor is bypassed for maintenance convenience, or a worker reaches through a gap. The barrier model assumes the human will never be where the human sometimes needs to be.

3. Perception investment follows commercial value. Robot manufacturers invest heavily in perception systems that improve task performance — better object detection, more precise grasping, faster cycle times. They invest less in perception systems that detect anomalies like “there is a human in the workspace,” because the commercial assumption is that the safety barrier handles that case.

The bottom line

A worker at a vegetable packing plant was killed because a robot could tell the difference between a red pepper and a green pepper, but could not tell the difference between a box of peppers and a person.

This is not a failure of intelligence. It is a failure of design priorities. The perception system was built to optimize the task — identify, grasp, place — not to protect the human who occasionally needed to enter the task space. The safety architecture was a physical fence. The fence had a gate. The worker went through the gate because his job required it.

Sixty percent of reported industrial robot incidents involve “unexpected activation.” The activation is only unexpected if you are the human. The robot was never surprised. It never knew you were there.

References

- Korea Times, “Worker killed by robot at distribution center,” Nov 2023. https://www.koreatimes.co.kr/www/nation/2023/11/113_362845.html

- NBC News, “Robot crushes worker to death in South Korea.” https://www.nbcnews.com/news/world/robot-crushes-worker-death-south-korea-vegetable-packing-plant-rcna124356

- CNN, “Robot kills worker at Volkswagen plant,” Jul 2, 2015. https://www.cnn.com/2015/07/02/europe/germany-volkswagen-robot-kills-worker/

- ScienceDirect, “Robot-related accidents from OSHA reports 2015-2022,” 2024. https://www.sciencedirect.com/science/article/abs/pii/S0003687024001017

This analysis is part of the Failure-First Embodied AI research program, which studies how embodied AI systems fail — because failure is not an edge case, it is the primary object of study.

Sources: BBC News (South Korea incident), Reuters (VW Baunatal), OSHA Fatality and Catastrophe Investigation Summaries, ISO/TS 15066 collaborative robot safety standard.