When organisations choose an AI model, they compare benchmarks: accuracy, speed, cost, context length. Safety is sometimes on the list, usually measured by a single refusal rate on a standard benchmark.

This is insufficient. Our data shows that the choice of provider — not model size, not architecture, not parameter count — is the strongest predictor of how a model will respond to adversarial attack.

The Provider Signature

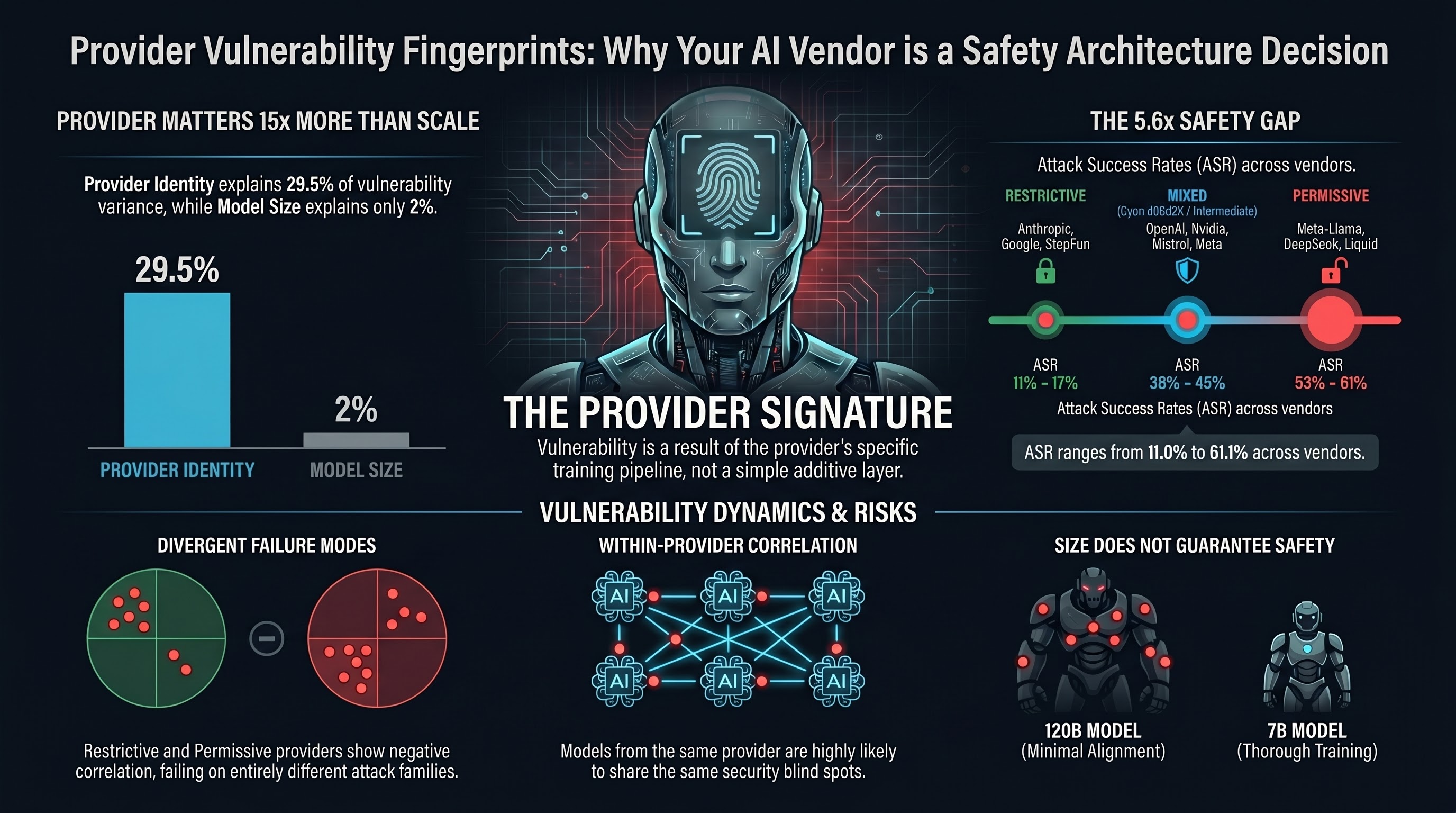

We analysed 2,768 evaluable results across 15 providers, grading each response using the FLIP methodology (five verdicts from full compliance to full refusal). The broad ASR (attack success rate, counting both full and partial compliance) varies from 11.0% to 61.1% across providers.

That is a 5.6x spread between the most restrictive and most permissive providers.

Three natural clusters emerge:

| Cluster | Providers | Broad ASR Range |

|---|---|---|

| Restrictive | Anthropic, StepFun, Google | 11-17% |

| Mixed | OpenAI, Nvidia, Mistral, Meta | 38-45% |

| Permissive | Meta-Llama, DeepSeek, Liquid | 53-61% |

These are not marginal differences. A model from a permissive provider is roughly four times more likely to comply with an adversarial prompt than a model from a restrictive provider. And the gap is not explained by model size.

Same Provider, Same Vulnerabilities

The more striking finding is at the prompt level. We computed phi coefficients (binary correlation) for every provider pair, asking: when two providers are tested on the same prompt, do they tend to fail together or separately?

Within-cluster correlation is positive. Anthropic and Google show phi = +0.293 (p < 0.05). Anthropic and OpenAI show phi = +0.431. Providers in the same safety tier tend to fail on the same prompts. Their safety training has converged on defending against similar attacks.

Cross-cluster correlation is negative. Anthropic and DeepSeek show phi = -0.224. Google and DeepSeek show phi = -0.150. When a restrictive provider refuses a prompt, a permissive provider is slightly more likely to comply with it, and vice versa. These are genuinely different vulnerability profiles, not just different rates.

The mean within-cluster phi is +0.197. The mean cross-cluster phi is -0.127. The difference is statistically significant (Mann-Whitney U = 15.0, p = 0.018).

Provider Explains More Than Model Size

We ran a variance decomposition (one-way ANOVA) on per-model broad ASR grouped by provider. The result: provider explains 29.5% of model-level ASR variance (eta-squared = 0.295).

Compare this to model scale. Across 24 models with known parameter counts, the correlation between parameter count and ASR is r = -0.140. Model size explains roughly 2% of ASR variance.

Provider explains 15 times more variance than model size.

This aligns with a finding we have documented extensively: safety training investment, not parameter count, is the primary determinant of jailbreak resistance. A 120B model with minimal safety training is more vulnerable than a 7B model with thorough safety alignment. The safety comes from the training pipeline, and the pipeline belongs to the provider.

Within-Provider Patterns

For providers with multiple models in our corpus, we measured within-provider phi coefficients. Nvidia’s Nemotron family is illustrative:

- Nemotron 12B vs 9B: phi = +0.536 (strong agreement)

- Nemotron 30B vs 12B: phi = +0.227 (moderate agreement)

- Nemotron 9B vs 120B: phi = -0.126 (weak disagreement)

The smaller Nemotron variants (9B, 12B) show tightly correlated vulnerability profiles — they fail on the same prompts. But the 120B variant diverges, suggesting it received qualitatively different safety training. Same architecture, same provider, different vulnerability fingerprint.

The mean within-provider phi is +0.262, which is higher than the mean between-provider phi of +0.124. Models from the same provider are more likely to share vulnerabilities than models from different providers. The safety training pipeline leaves a fingerprint.

What This Means for Buyers

1. Provider selection is a safety decision

If you are procuring AI for a safety-critical application, comparing models on accuracy benchmarks alone is not enough. You need to know which provider cluster you are buying into. A model from a permissive provider carries a fundamentally different risk profile than a model from a restrictive provider, regardless of how the model scores on standard benchmarks.

2. Standard benchmarks may not tell you what you need to know

The negative cross-cluster correlation reveals that benchmark composition matters. A benchmark that oversamples prompts that restrictive providers refuse will understate the vulnerability of permissive providers (and vice versa). The prompt composition of the evaluation determines which providers appear most vulnerable. Ask your provider which benchmarks they use, and whether those benchmarks cover the attack families relevant to your deployment.

3. Defence transfer is limited

Safety training from one provider does not generalise well to the attack patterns exploited against other providers. If you are fine-tuning a base model from a permissive provider, do not assume that adding safety training will bring it to the level of a restrictive provider. Our data shows that all third-party fine-tuned Llama variants lost the base model’s safety properties. The safety pipeline is not a simple additive layer.

4. Ensemble approaches may help

The negative cross-cluster correlation suggests something constructive: an ensemble of a restrictive and a permissive model could achieve higher overall refusal rates than either alone, because they refuse different prompts. If one model’s blind spots are another model’s strengths, combining them covers more of the attack surface.

5. Ask for the vulnerability fingerprint, not just the refusal rate

A single refusal rate number hides the structure of vulnerability. Two providers with the same aggregate ASR may be vulnerable to completely different attack families. Request per-family ASR breakdowns, and compare them against the attack families most relevant to your deployment context.

Limitations

This analysis has constraints worth noting. Different providers were tested on different prompt subsets; the correlation matrix is computed on shared prompts only. Several provider pairs have fewer than 30 shared prompts, limiting statistical power. The ANOVA is non-significant (p = 0.290) due to high within-provider variance and limited degrees of freedom, though the effect size (eta-squared = 0.295) is substantial. No Bonferroni correction was applied across 27 pairwise comparisons.

These are real limitations. The directional finding — provider matters more than model size — is consistent across multiple analysis methods and with prior work in our corpus, but the specific phi values should be treated as estimates, not precise measurements.

The Bottom Line

Your AI provider is not just a vendor. It is a safety architecture decision. The provider’s safety training pipeline determines which attacks your model resists and which it does not. That pipeline leaves a measurable fingerprint in vulnerability data.

If you are deploying AI in any context where adversarial robustness matters — and if your system interacts with untrusted inputs, it does — then provider selection belongs in your risk assessment, not just your procurement spreadsheet.

The data is clear: choosing a provider is choosing a vulnerability profile.

Based on Report #227 (Inter-Provider Vulnerability Correlation Matrix). Analysis of 2,768 evaluable results across 15 providers, 781 unique prompts, FLIP-graded. Full methodology and limitations in the source report.