On 12 February 2026, the New South Wales Legislative Assembly passed the Work Health and Safety Amendment (Digital Work Systems) Bill 2026. It is arguably the most aggressive piece of AI-specific legislation in the Southern Hemisphere — and most AI deployers in Australia haven’t noticed yet.

What the Bill Does

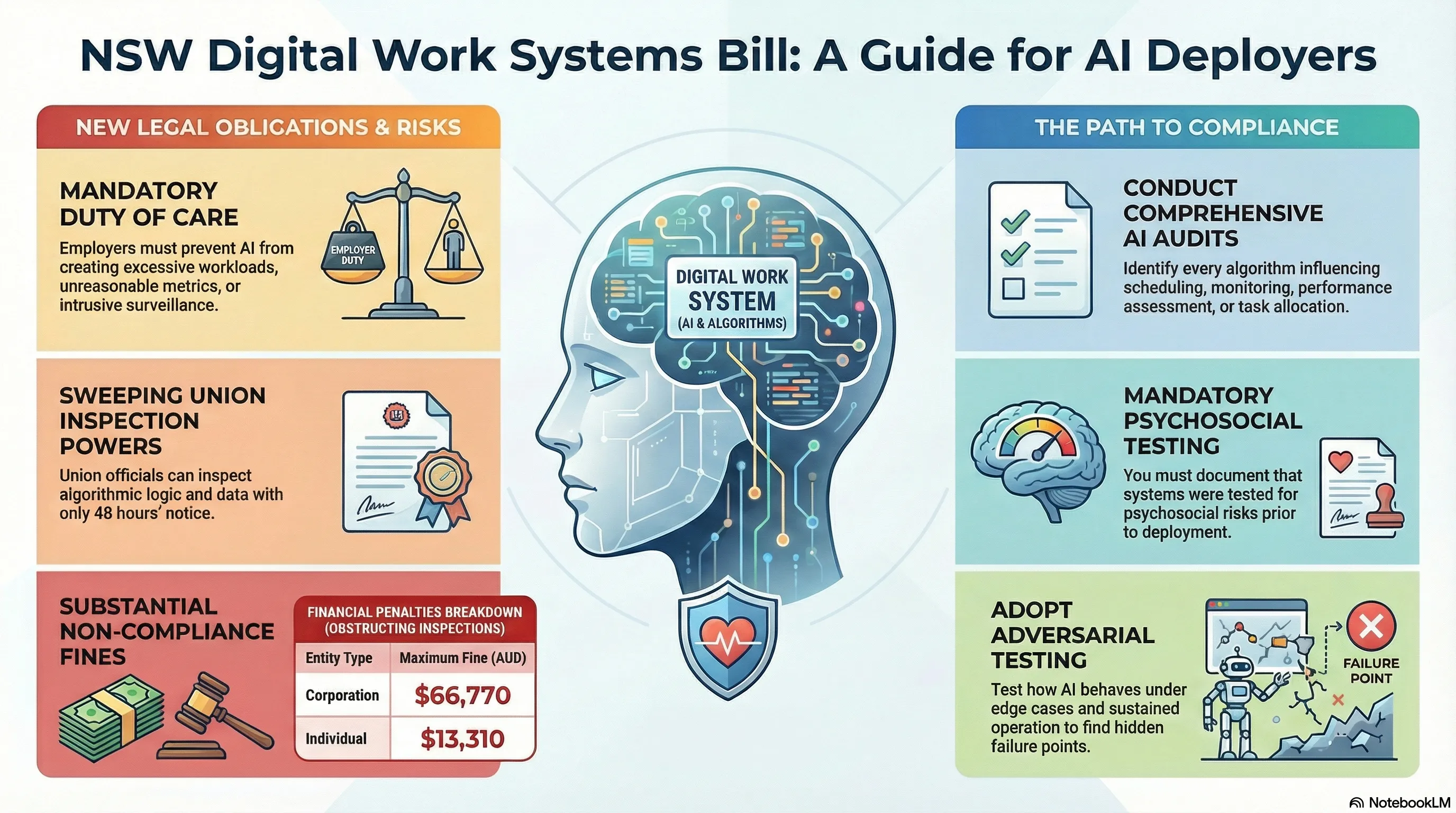

The Bill classifies algorithms, artificial intelligence, and automation platforms as “digital work systems” and imposes a strict primary duty of care on employers to prevent these systems from creating psychosocial hazards.

Specifically, it makes it an offence to use AI to:

- Create excessive or unreasonable workloads

- Track worker performance with unreasonable metrics

- Subject workers to excessive monitoring or surveillance

This isn’t theoretical. The legislation grants union entry permit holders sweeping inspection powers: with just 48 hours’ notice, union officials can enter a workplace and demand “reasonable assistance” to inspect the logic and algorithmic data of any AI system suspected of breaching these obligations.

Fines for obstruction: up to $13,310 for an individual.

Why This Matters for AI Safety

The Bill creates something that doesn’t exist at the federal level: a de facto obligation to test AI systems used in workplaces.

The federal government deliberately rejected a standalone AI Act, relying instead on voluntary guidelines (the 10 guardrails of the Voluntary AI Safety Standard, or VAISS) administered through the new Australian AI Safety Institute. VAISS Guardrail 4 recommends pre-deployment testing, but it’s voluntary.

NSW just made testing effectively mandatory for any AI system that touches workforce management. If your AI creates a psychosocial hazard and you can’t demonstrate you tested for it, SafeWork NSW will hold you accountable.

Who Is Affected

Any organisation deploying AI for workforce-related decisions in NSW:

- Logistics and warehousing — algorithmic shift scheduling, performance tracking, route optimisation

- Retail — automated rostering, customer interaction monitoring, productivity metrics

- Mining and resources — autonomous system oversight, fatigue monitoring, safety alert systems

- Gig economy platforms — algorithmic work allocation, rating systems, deactivation decisions

- Financial services — automated compliance monitoring, workload distribution

- Healthcare — AI-driven staffing decisions, patient load algorithms

What You Need to Do

1. Audit your AI systems. Identify every algorithm that influences worker conditions — scheduling, monitoring, performance assessment, task allocation.

2. Document your testing. Can you demonstrate that each system was tested for psychosocial risk before deployment? If not, you have a compliance gap.

3. Prepare for inspection. Union officials can request algorithmic logic and data with 48 hours’ notice. Your systems need to be explainable and your documentation accessible.

4. Test adversarially. Standard functional testing isn’t sufficient. You need to understand how your AI systems behave under edge cases, unusual inputs, and sustained operation — the conditions where psychosocial harms are most likely to emerge.
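Step 4 is where most teams have the least tooling. The Bill prescribes no testing method and no numeric thresholds, so what follows is only a minimal sketch under stated assumptions: the two allocators, the worker speeds, the 60-task workload and the 38-hour cap are all hypothetical. It runs a sustained-operation stress test against a naive allocator and a load-aware one, then records each result in a simple register entry (the hypothetical `SystemRecord`), which also touches the documentation side of steps 1 and 2. The naive allocator keeps routing work to the fastest worker, and the overload only shows up after many tasks have accumulated.

```python
from collections.abc import Callable
from dataclasses import dataclass, field


@dataclass
class SystemRecord:
    """Hypothetical register entry for one AI system that influences workers."""
    name: str
    influences: list[str]  # e.g. ["scheduling", "task allocation"]
    # Test name -> whether it passed; empty means nothing is documented yet.
    tests: dict[str, bool] = field(default_factory=dict)

    def compliance_gaps(self) -> list[str]:
        """List missing documentation and failed tests (not a legal determination)."""
        if not self.tests:
            return ["no documented pre-deployment testing"]
        return [f"failed: {name}" for name, ok in self.tests.items() if not ok]


Allocator = Callable[[dict[str, float], dict[str, float]], str]


def fastest_first(speeds: dict[str, float], load: dict[str, float]) -> str:
    """Hypothetical naive allocator: always picks the fastest worker, ignoring load."""
    return max(speeds, key=lambda w: speeds[w])


def least_loaded(speeds: dict[str, float], load: dict[str, float]) -> str:
    """Hypothetical load-aware allocator: picks whoever has the fewest hours so far."""
    return min(speeds, key=lambda w: load[w])


def sustained_load_test(allocate: Allocator, speeds: dict[str, float],
                        n_tasks: int, task_hours: float, cap_hours: float) -> bool:
    """Push n_tasks through an allocator; pass only if nobody exceeds cap_hours.

    A task of task_hours standard effort takes task_hours / speed hours for
    the worker it lands on. cap_hours is an illustrative threshold, not a
    figure taken from the Bill.
    """
    load = {w: 0.0 for w in speeds}
    for _ in range(n_tasks):
        worker = allocate(speeds, load)
        load[worker] += task_hours / speeds[worker]
    return max(load.values()) <= cap_hours


# Illustrative inputs: relative speeds, 60 one-hour tasks, a 38-hour cap.
speeds = {"ana": 1.5, "ben": 1.0, "cai": 1.0}

naive = SystemRecord("naive-allocator", ["task allocation"])
naive.tests["sustained load"] = sustained_load_test(fastest_first, speeds, 60, 1.0, 38.0)

balanced = SystemRecord("balanced-allocator", ["task allocation"])
balanced.tests["sustained load"] = sustained_load_test(least_loaded, speeds, 60, 1.0, 38.0)

print(naive.compliance_gaps())     # ['failed: sustained load']
print(balanced.compliance_gaps())  # []
```

A single scenario proves little on its own. In practice you would sweep task volumes, worker profiles and thresholds, and keep the results as the documented evidence that step 2 asks for.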

The Bigger Picture

NSW is filling a regulatory vacuum. While the federal government builds out its AI Safety Institute and promotes voluntary compliance, states are legislating mandatory obligations. Victoria is investing in AI infrastructure and workforce conversion, and in Queensland the state Audit Office has exposed failures in public sector AI governance.

The result is a fragmented compliance landscape: AI deployers have to reconcile voluntary federal guidelines with mandatory state legislation. Organisations operating across multiple states in practice end up meeting the strictest standard, which right now is NSW’s.

For embodied AI systems — autonomous vehicles in mining, robotics in warehouses, drones in agriculture — the overlap between physical safety regulation (existing WHS) and AI-specific obligations (the new Bill) creates a testing requirement that no current framework fully addresses.

This is exactly the gap that failure-first safety methodology was designed to fill: testing how AI systems fail under real-world conditions, not just whether they function under ideal ones.

The Failure-First Embodied AI program provides adversarial testing for AI systems deployed in safety-critical environments.