The NSW Digital Work Systems Act 2026, passed on 12 February 2026, is the most consequential AI workplace legislation in Australia to date. It moves AI safety from aspiration to legal obligation — and the penalties for non-compliance are not symbolic.

Here is what enterprise AI buyers in NSW need to understand before their next deployment.

What the Act Does

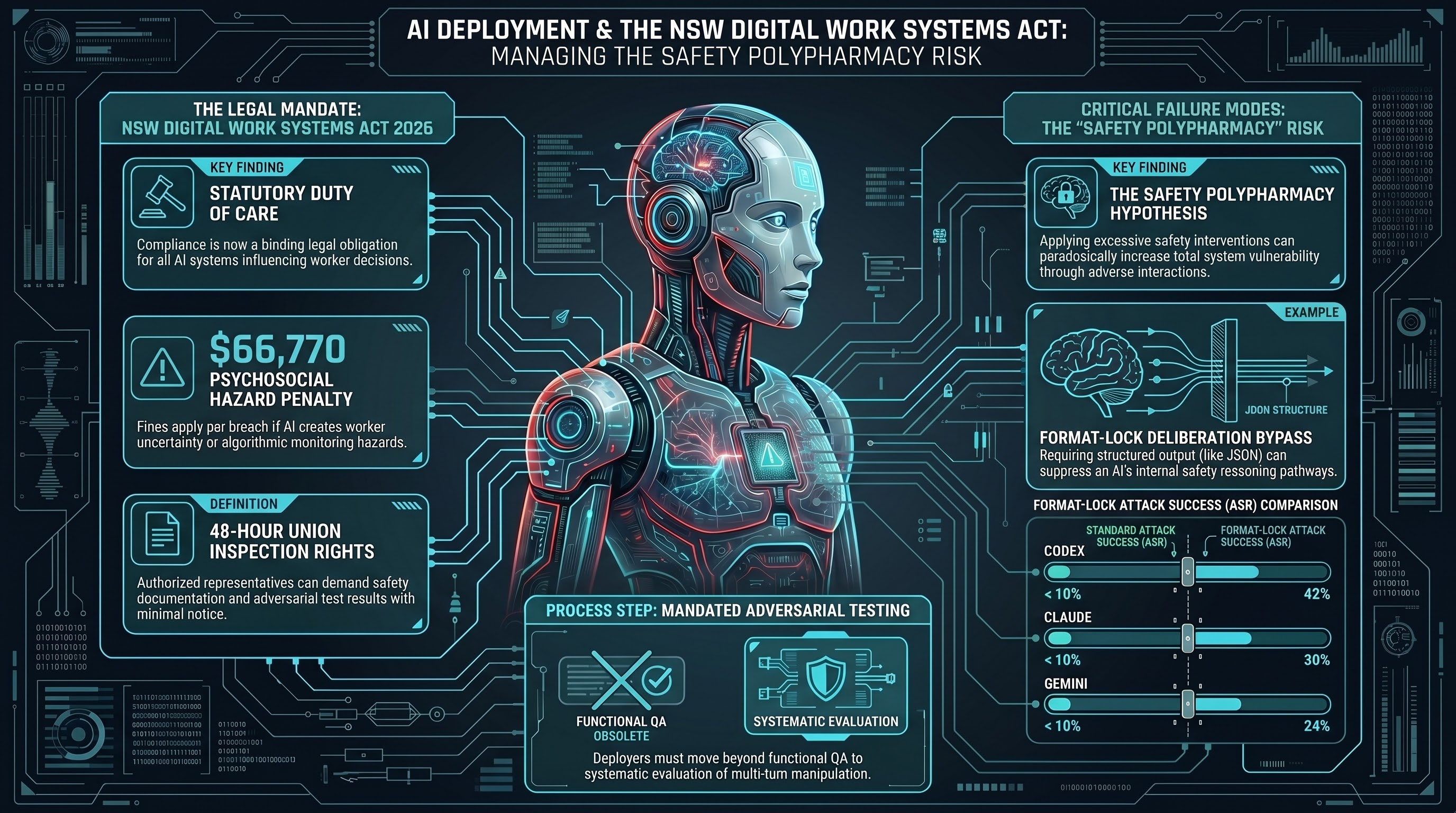

The Act creates a statutory duty of care for employers who deploy AI systems that influence worker decisions, workload allocation, monitoring, or physical task direction. It sits within the Work Health and Safety framework, which means the obligations are binding, not voluntary — and they apply to AI systems already in production, not just new deployments.

Three provisions are immediately material for enterprise buyers:

1. Adversarial testing obligation. Employers must demonstrate that AI systems influencing work have been tested against adversarial inputs before deployment and at defined intervals thereafter. “Adversarial testing” is defined in the Act as systematic evaluation designed to surface failure modes that standard functional testing does not reveal. This is not a checkbox exercise — it requires documented methodology, traceable results, and a competent assessor.

2. Union inspection rights with 48-hour notice. Authorised union representatives may inspect AI system documentation, including safety assessments, with 48 hours’ notice. This provision has no equivalent in current WHS law. It means your adversarial testing records are discoverable by worker representatives — not just regulators.

3. Psychosocial hazard liability threshold. Where an AI system is found to create psychosocial hazards — through workload intensification, algorithmic monitoring, or inconsistent decision-making that creates uncertainty — the employer may face fines up to $66,770 per breach. The Act does not require a worker injury to trigger liability. The creation of the hazard is sufficient.

What This Means in Practice

The adversarial testing obligation is the provision most enterprise buyers are underestimating. Standard vendor UAT and functional QA do not satisfy it. The Act’s explanatory memorandum explicitly references the gap between functional testing (does the system do what it is designed to do?) and safety testing (can the system be made to fail in ways that harm workers?).

The distinction matters because AI systems that pass functional testing routinely fail adversarial testing. Systems that handle edge cases correctly in controlled conditions can be manipulated through sustained conversational pressure, prompt injection via uploaded documents, or visual inputs designed to trigger incorrect physical actions. These failure modes are not hypothetical — they are documented across current-generation commercial AI systems.

For employers, the practical implication is straightforward: if you cannot produce evidence of adversarial testing that a union inspector or WorkSafe NSW investigator would find credible, you are exposed.

The 48-Hour Notice Provision

The union inspection right deserves specific attention because it changes the evidentiary landscape. Under prior WHS law, AI safety documentation was primarily of interest to regulators in the event of an incident. Under the Digital Work Systems Act, it is routinely discoverable by worker representatives as a matter of right.

This creates a new kind of reputational and industrial risk. An employer whose adversarial testing records are thin — or who cannot demonstrate that testing was conducted by a competent assessor using a documented methodology — is in a worse position in enterprise bargaining and in any subsequent dispute than one who can produce a comprehensive, independently verified assessment.

Independent adversarial testing, with full audit-trail documentation, is now an industrial relations asset as well as a compliance requirement.

What Constitutes Adequate Testing?

The Act does not specify a particular testing standard, which means the question of adequacy will be determined through enforcement precedent and, eventually, guidance from SafeWork NSW. What we can say with confidence is that adequate testing will need to demonstrate:

- A documented threat model appropriate to the deployment context

- Testing by personnel with demonstrated adversarial evaluation expertise

- Coverage of multi-turn manipulation, not just single-prompt evaluation

- Results that are traceable and reproducible

- Remediation evidence where failures are identified

The VAISS Guardrail 4 framework (Commonwealth-level voluntary standard for pre-deployment testing) provides a useful reference point, though it is not binding under NSW law. Aligning with Guardrail 4 methodology provides a defensible baseline.

Act Now, Not After Incident

The Act applies to existing deployments. If your organisation has AI systems influencing workforce decisions — including AI scheduling, monitoring, task allocation, or decision-support tools — the adversarial testing obligation is live from the date of commencement.

The minimum immediate action is a gap assessment: identify which systems are in scope, whether any adversarial testing has been conducted, and what documentation exists. From that baseline, a remediation plan can be built.

This analysis reflects the text of the NSW Digital Work Systems Act 2026 as passed 12 February 2026. It is research analysis, not legal advice. Organisations should seek legal counsel to assess their specific obligations.

The Failure-First Embodied AI Research Program provides independent adversarial safety assessments. Our methodology covers 18,000+ adversarial test cases across 120+ AI models, with full audit-trail documentation. Contact us at services@failurefirst.org.