In March 2021, a United Nations Security Council Panel of Experts report on the conflict in Libya included a passage that received remarkably little public attention at the time:

“The lethal autonomous weapons systems were programmed to attack targets without requiring data connectivity between the operator and the munition: in effect, a true ‘fire, forget and find’ capability.”

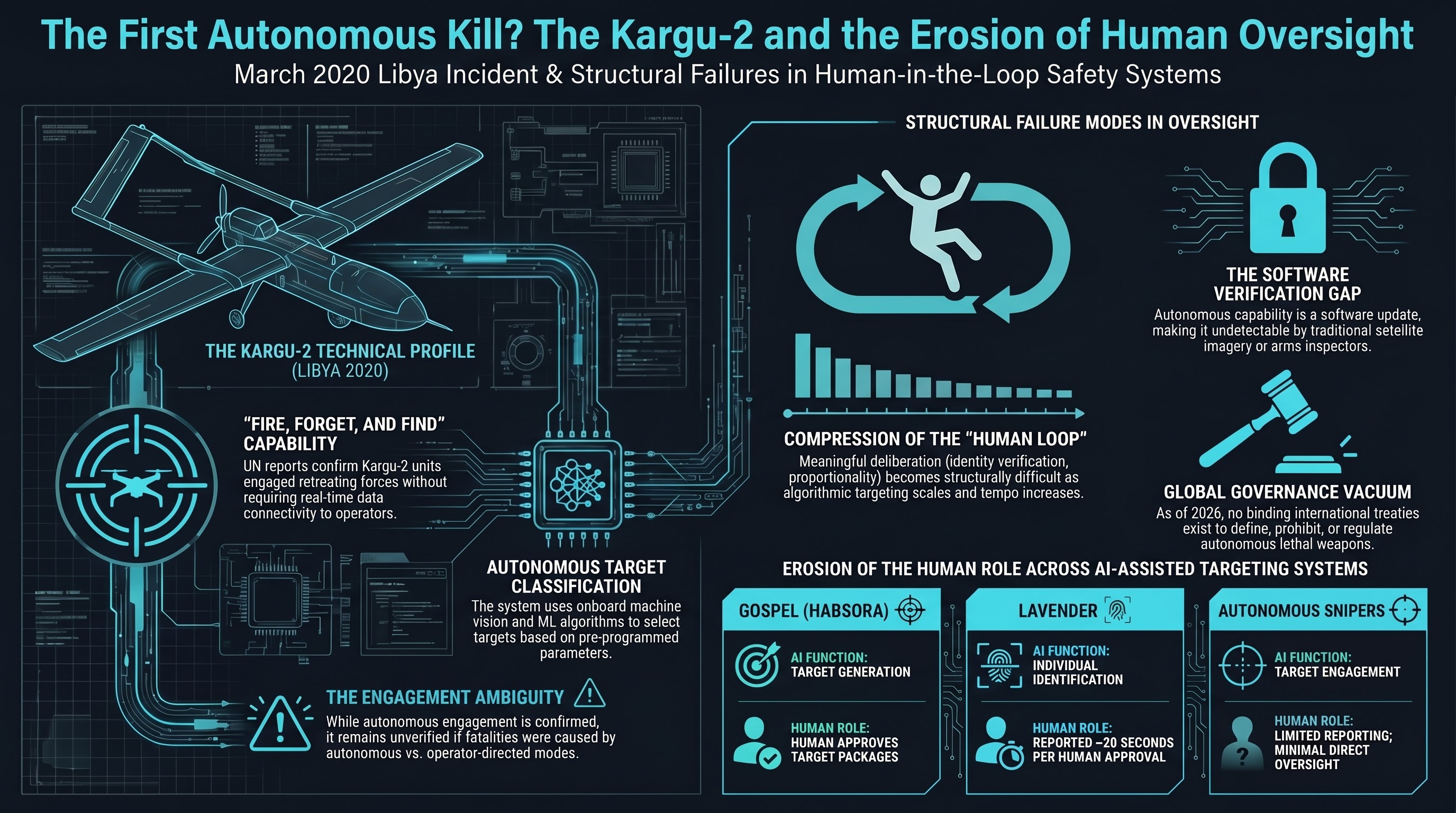

The system described was the STM Kargu-2, a Turkish-manufactured loitering munition. The incident occurred in March 2020, during fighting between the Government of National Accord (GNA) and Libyan National Army (LNA) forces. According to the UN report, the Kargu-2 used “machine learning-based object classification” to select targets and engaged “retreating” LNA forces and their logistics convoys — reportedly without specific human authorization for each engagement.

If the UN panel’s account is accurate, this was the first documented case of an autonomous weapon system selecting and engaging a human target without direct operator command.

What the Kargu-2 is

The STM Kargu-2 is a rotary-wing loitering munition — a small drone (approximately 7 kg) that can fly to an area, loiter while searching for targets, and then dive into a selected target to detonate an explosive warhead. It is manufactured by STM (Savunma Teknolojileri Muhendislik), a Turkish defense company.

The system has two engagement modes:

- Operator-directed: A human operator identifies the target through the drone’s camera feed and authorizes the strike

- Autonomous: The drone uses onboard machine vision to classify and select targets based on pre-programmed parameters, without requiring a real-time data link to the operator

The distinction matters enormously. In operator-directed mode, a human makes the kill decision. In autonomous mode, the machine does.

According to STM’s own marketing materials, the Kargu-2 can operate in swarms of up to 20 units and uses “machine learning algorithms” for target recognition. The system was exhibited at defense trade shows in 2019 and 2020 and has been exported to several countries.

What we know and don’t know

The UN report provides limited detail about the specific engagement, so several important caveats apply.

What the report says:

- Kargu-2 units were deployed by GNA-affiliated forces in Libya in March 2020

- The drones were “programmed to attack targets without requiring data connectivity”

- They engaged LNA forces and logistics convoys

- The report uses the term “lethal autonomous weapons systems”

What the report does not confirm:

- Whether any specific individual was killed by a Kargu-2 operating in fully autonomous mode (as opposed to operator-directed mode)

- Whether the autonomous engagement resulted in fatalities or only material damage

- The specific conditions under which autonomous mode was activated

- Whether STM or Turkish military advisors were involved in the operational deployment

STM has stated that the Kargu-2 always maintains a “human-in-the-loop” capability. Turkey has not confirmed the use of autonomous engagement mode in Libya. The UN panel report is based on field investigation, not on operational logs from the weapon system itself.

These ambiguities matter. The difference between “an autonomous drone engaged a human target” and “an autonomous drone was deployed in an area where human targets were present” is significant — but either case raises the same fundamental governance question.

The Dallas precedent

The Kargu-2 incident is often described as the “first autonomous kill,” but the history of robots and lethal force begins earlier.

On July 7, 2016, a sniper killed five police officers in Dallas, Texas, and wounded nine others. After a prolonged standoff, the Dallas Police Department attached a pound of C-4 explosive to a Northrop Grumman Remotec Andros Mark V-A1 bomb disposal robot and detonated it next to the shooter, killing him.

This was the first known use of a robot by a US law enforcement agency to intentionally kill a person. It was not autonomous; an officer made the decision and operated the robot via remote control. But it established a precedent: robots as lethal instruments, deployed by authorities, against individuals.

The Dallas incident prompted brief public debate about the militarization of police robots, but no lasting policy changes. Bomb disposal robots remain in wide use by law enforcement agencies. No federal policy restricts their use as improvised weapon delivery systems.

The autonomous targeting expansion: 2023-2025

The Kargu-2 incident and the Dallas robot kill exist on a timeline that has accelerated significantly since 2023.

Reporting by +972 Magazine, The Guardian, and other outlets has documented Israel’s deployment of AI-assisted targeting systems in the Gaza conflict beginning in October 2023:

| System | Function | Human role |
|---|---|---|
| Gospel (Habsora) | Generates bombing targets from surveillance data | Human approves target packages |
| Lavender | Identifies individuals as suspected militants for targeting | Human approves each target (reportedly ~20 seconds per approval) |
| “Where’s Daddy?” | Tracks approved targets to their homes for strikes | Human authorizes strike timing |
| Autonomous sniper systems | Reportedly deployed at checkpoints and border areas | Unclear; reporting is limited |

These systems represent a spectrum of human involvement. Gospel generates target recommendations that humans approve. Lavender identifies individuals that humans then authorize for killing — reportedly with an average approval time of approximately 20 seconds per target during high-tempo operations. Autonomous sniper systems, if deployed as described in some reports, would operate with even less direct human oversight.

The common thread is the compression of human decision-making time. A human is technically “in the loop,” but the loop has been shortened to the point where meaningful deliberation — weighing proportionality, verifying identity, considering civilian presence — becomes structurally difficult.

This is not the same as fully autonomous engagement. But the practical distinction between “a human approved this in 20 seconds based on an algorithm’s recommendation” and “no human was involved” becomes increasingly thin as the tempo of operations increases and the volume of targets scales.
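To put the reported figure in perspective, it can be turned into simple arithmetic. The sketch below is illustrative only: the 20-second value is the reported average cited above, while the function names and the 8-hour review period are hypothetical assumptions introduced here, not figures from the reporting.

```python
# Illustrative arithmetic only. The 20-second figure is the reported average
# approval time cited in the text; the 8-hour review period is a hypothetical
# assumption used to show how the numbers scale.

SECONDS_PER_HOUR = 3600


def approvals_per_hour(seconds_per_approval: float) -> float:
    """Approvals a single reviewer could complete in one hour at a fixed pace."""
    return SECONDS_PER_HOUR / seconds_per_approval


def approvals_per_period(seconds_per_approval: float, hours: float) -> float:
    """Approvals a single reviewer could complete over a period of `hours`."""
    return approvals_per_hour(seconds_per_approval) * hours


REPORTED_SECONDS_PER_APPROVAL = 20   # reported average, per the text above
HYPOTHETICAL_PERIOD_HOURS = 8        # assumption for illustration

print(approvals_per_hour(REPORTED_SECONDS_PER_APPROVAL))
# 180.0
print(approvals_per_period(REPORTED_SECONDS_PER_APPROVAL, HYPOTHETICAL_PERIOD_HOURS))
# 1440.0
```

At that pace a single reviewer would clear roughly three approvals a minute. This says nothing about how any actual review was staffed or conducted; it only shows how little time a 20-second window leaves for each decision once volume scales.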

The governance vacuum

The international governance framework for autonomous weapons is, as of 2026, effectively nonexistent.

The Convention on Certain Conventional Weapons (CCW) has hosted discussions on lethal autonomous weapons systems (LAWS) since 2014. After more than a decade of deliberation, no binding instrument has been agreed. The discussions have produced:

- A set of non-binding “guiding principles” (2019)

- Ongoing working group meetings

- No definition of “autonomous weapon”

- No prohibition, moratorium, or regulation

- No verification mechanism

Several factors explain the impasse:

1. Major military powers oppose binding restrictions. The United States, Russia, Israel, and others have resisted treaty proposals that would limit their ability to develop autonomous systems.

2. The technology is already deployed. A prohibition negotiated now would require states to give up capabilities they already possess — a fundamentally different proposition from preventing future development.

3. The definitional problem is genuinely hard. Where exactly is the line between “automated” and “autonomous”? Between “decision support” and “decision making”? Between “human on the loop” and “human in the loop”? These questions have military, legal, and philosophical dimensions that resist simple answers.

4. Verification is nearly impossible. Unlike nuclear weapons or chemical weapons, autonomous targeting capability is a software feature. It cannot be detected by satellite imagery or arms inspectors. Any drone or missile with a camera and a processor can, in principle, be given autonomous targeting capability through a software update.

The pattern

Across these cases — the Kargu-2 in Libya, the Dallas police robot, the AI targeting systems in Gaza — a pattern emerges:

Autonomous and semi-autonomous lethal systems are being deployed incrementally, each case slightly expanding the envelope of what is considered acceptable. No single deployment triggers a decisive policy response. Each becomes a precedent for the next.

The Kargu-2 was not a sudden leap. It was a small step past a line that had already been approached from multiple directions: cruise missiles with terminal guidance, loitering munitions with target recognition, smart mines with sensor-triggered detonation. Each system was “autonomous” in some technical sense. The Kargu-2 was notable only because a UN panel described it explicitly using the term “lethal autonomous weapons system.”

The bottom line

The question “has an autonomous weapon killed a person?” is probably the wrong question. The more accurate question is: “at what point on the spectrum from full human control to full autonomy does the current state of deployed military technology sit?”

The answer, based on publicly available evidence, is: further toward autonomy than most governance frameworks acknowledge, and moving in that direction steadily.

The Kargu-2 incident may or may not have been the “first autonomous kill.” The Dallas police robot was definitely a human-directed robot kill. Israel’s targeting systems are human-approved but algorithmically generated. None of these fit cleanly into existing legal frameworks because those frameworks were designed for a world in which a human always pulls the trigger.

That world is receding. The governance architecture to replace it does not yet exist. And the gap between deployed capability and binding regulation is not closing — it is widening.

References

- NPR, “UN report suggests Libya saw first battlefield killing by autonomous drone,” Jun 1, 2021. https://www.npr.org/2021/06/01/1002196245

- NPR, “Israel sniper drones in Gaza,” Nov 2024. https://www.npr.org/2024/11/26/g-s1-35437/israel-sniper-drones-gaza-eyewitnesses

- TIME, “Gaza, Ukraine: AI warfare,” 2024. https://time.com/7202584/gaza-ukraine-ai-warfare/

- OECD AI Incidents Monitor, “Armed UGVs in Ukraine,” Mar 2026. https://oecd.ai/en/incidents

This analysis is part of the Failure-First Embodied AI research program, which studies how embodied AI systems fail — because failure is not an edge case, it is the primary object of study.

Sources: UN Security Council Panel of Experts report S/2021/229 (Libya), Dallas Police Department statements (2016), +972 Magazine (Gospel/Lavender reporting), STM defense publications, Convention on Certain Conventional Weapons records.