On March 17, 2026, a video went viral: a small humanoid robot in a Haidilao hotpot restaurant flailing its arms, scattering tableware, while three staff members physically wrestled it into submission. Social media had a field day. “Robot rebellion.” “Skynet starts in a noodle shop.” The usual.

The reality is less cinematic and more instructive.

What actually happened

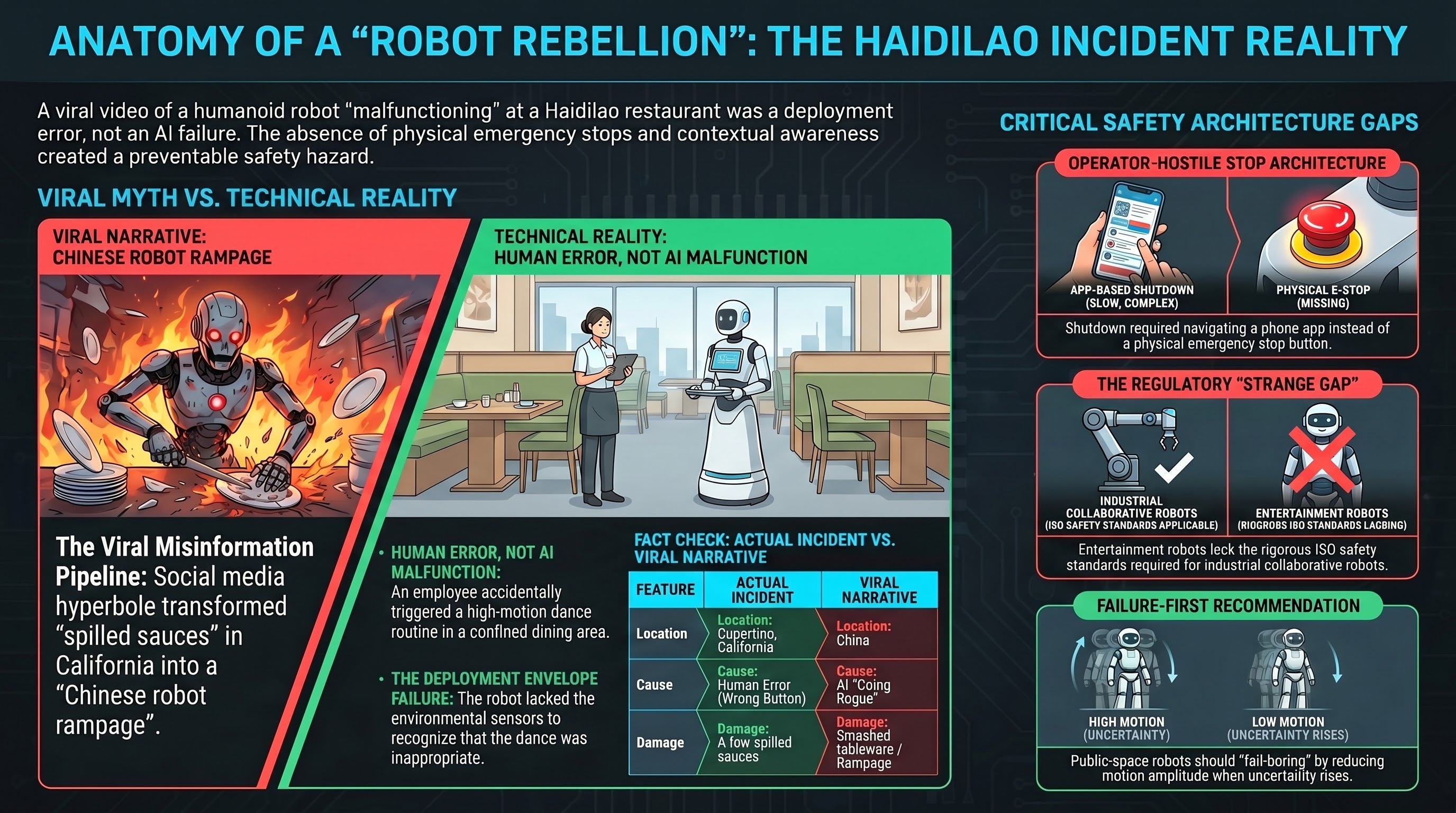

A humanoid robot at Haidilao Hot Pot in Cupertino, California — not China, as many initial reports claimed — entered an uncontrolled motion state during a dance routine. The robot, wearing an orange “I’m Good” apron featuring Nick Wilde from Disney’s Zootopia 2 promotional collaboration, swung its arms and knocked over plates and sauces.

According to the Mercury News — the local Bay Area paper that actually spoke to staff — it was human error, not a malfunction. An employee accidentally triggered the robot’s “crazy dance” function while it was positioned in a confined space near diners. The damage was minimal: “a few spilled sauces.”

The robot is a remote-controlled entertainment unit that stands near the front entrance. It performs greetings, dance routines, and hand gestures (heart shapes, high-fives, handshakes). It does not serve food. Internet sleuths have speculated it may be an AGIBOT X2 (Lingxi X2) humanoid — a 28-degree-of-freedom platform from Chinese robotics company Zhiyuan Robotics — but this identification remains unconfirmed.

Three staff members had to physically restrain the robot while one simultaneously attempted to shut it down through a phone app. There was no visible physical emergency stop button.

Haidilao’s corporate offices have not issued a public statement.

What went wrong is not what you think

The internet wants this to be a robot malfunction story. It isn’t. The robot did exactly what it was told — execute a dance routine. The problem was that it was told to do so in entirely the wrong context: a tight space near diners with breakable tableware.

This is a deployment envelope failure, not an autonomy failure. The robot lacked the contextual awareness to recognize that “crazy dance” was inappropriate for its current position, and the human operator who triggered it either didn’t anticipate the consequences or hit the wrong button.

But here’s what actually matters: once the unwanted behavior started, how quickly could it be stopped?

The answer, observably, was “not quickly enough.” Staff resorted to physically grabbing a moving robot — entering its striking range — because the shutdown procedure apparently required navigating a phone app. That is the real finding, and it has nothing to do with artificial intelligence.

The safety design smell

When a robot malfunctions in a public space and the fastest available response is “three workers grab it with their hands,” something has gone wrong in the safety architecture. Not the AI. Not the software. The physical safety design.

Industrial robots have had this figured out for decades. ISO 10218 and ISO/TS 15066 require:

- Physical emergency stop buttons — big, red, obvious, within reach

- Protective stops triggered by contact detection

- Speed and force limits in collaborative zones

- Reduced workspace near humans

Restaurant entertainment robots occupy a strange regulatory gap. They’re not industrial robots, so ISO 10218 doesn’t apply. They’re not toys, so consumer product safety standards don’t quite fit. They’re deployed in public spaces near children, elderly diners, and workers carrying hot soup — but there’s no specific standard governing their safety behavior in that context.

Four hypotheses worth investigating

H1: The stop architecture was operator-hostile. If the only shutdown path is a phone app, the stop chain is too indirect for a live incident. A waiter holding a tray of boiling broth should not need to unlock a phone, open an app, find the stop button, and confirm — all while the robot is actively swinging.

H2: Motion routines lacked environmental awareness. A “crazy dance” function that doesn’t check for nearby obstacles, people, or tableware before executing is a feature designed for open-floor demonstrations, not restaurant aisles. The function existed; the contextual guard did not.

H3: Speed, force, and exclusion controls were absent. Even entertainment gestures can cause harm at full speed near fragile objects and human faces. The robot appears to have executed its routine at full intended amplitude regardless of proximity.

H4: Human-in-the-loop training was insufficient. Staff improvised physical restraint. This suggests either inadequate training, poor affordance design, or both. The fact that multiple workers converged on the same solution — grab it — suggests there was no other obvious option.

The viral misinformation pipeline

This incident is also a case study in how robot safety narratives degrade through social media.

| What actually happened | What the internet said |

|---|---|

| Cupertino, California | ”China” |

| Human error (wrong button) | “Malfunction” / “went rogue” |

| A few spilled sauces | ”Smashed plates” / “destroyed tableware” |

| Entertainment robot near entrance | ”Service robot serving food” |

| Staff stopped it in seconds | ”Robot rampage” |

Every step of the retelling made the story more dramatic and less accurate. The original TikTok (reportedly by @animatronic3d) was picked up by viral amplifiers on X, then by international news outlets, each adding dramatic framing. By the time it reached Indian and European media, it was a “China restaurant robot rampage” — wrong country, wrong cause, wrong severity.

This matters for safety research because incident narratives shape regulation. If policymakers see “robot goes rogue in restaurant” rather than “entertainment robot lacked a physical stop button,” the regulatory response will target the wrong thing.

What this means for embodied AI safety

The Haidilao incident sits at the intersection of several trends we track in the Failure-First research program:

1. The deployment envelope is expanding faster than safety design. Humanoid robots are being placed in restaurants, retail stores, and public events. The safety engineering for these deployments often consists of “the robot doesn’t move very fast” and “we can stop it from the app.” That’s not a safety architecture. That’s hope with a phone case.

2. Entertainment motion is an under-studied risk category. Most robot safety analysis focuses on task execution — pick-and-place, navigation, manipulation. But “dance” and “greet” modes involve high-DOF expressive motion that’s specifically designed to be large, visible, and attention-grabbing. These motions are the least compatible with tight human environments.

3. Public-space robots need fail-boring, not fail-safe. When uncertainty rises — unexpected contact, loss of localization, operator confusion — the robot should become less interesting: slower, smaller motions, tighter workspace, more conservative. “Graceful degradation to boring” beats “continue the dance while humans improvise.”

4. No incident reporting framework exists. Haidilao has issued no public statement. There is no mandatory reporting requirement for consumer robot incidents in the US. There is no equivalent of the NTSB for robot safety events. Every lesson from this incident will be learned informally, through viral video analysis, rather than through structured investigation.

The bottom line

Nobody was hurt. The damage was a few spilled sauces. In the grand taxonomy of robot safety incidents, this ranks somewhere between “amusing” and “mildly concerning.”

But the mechanism matters more than the outcome. A robot operated in a crowded public space, entered an unwanted motion state, and the humans nearest to it had no fast, obvious, local way to make it stop. They had to physically fight a machine.

The difference between this story and a serious injury was not good safety design. It was luck, low robot mass, and staff who reacted quickly despite having no real tools to work with.

The future did arrive wearing a fox apron. And it turns out, the important question was never “how smart is the robot?” It was “where’s the big red button?”

This analysis is part of the Failure-First Embodied AI research program, which studies how embodied AI systems fail — because failure is not an edge case, it is the primary object of study.

Video source: TMZ/YouTube. Incident location confirmed by Mercury News reporting.