What Was Announced

On 25 November 2025, Senator Tim Ayres announced the establishment of the Australian Artificial Intelligence Safety Institute (AISI), nested within the Department of Industry, Science and Resources (DISR). The institute was framed as a whole-of-government technical coordination hub — Australia’s formal fulfilment of commitments made at the 2023 Bletchley Park AI Safety Summit and the 2024 Seoul Declaration.

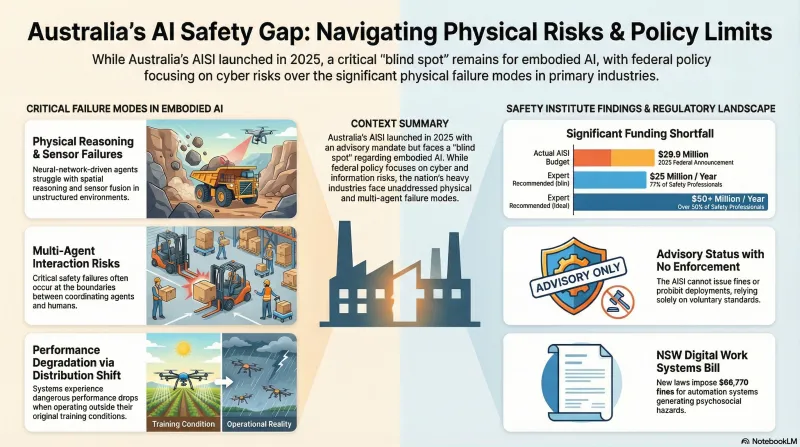

The institute launched with a budget of 25 million per year, with over half suggesting more than $50 million annually to build meaningful sovereign capability. The AISI’s allocation — likely spread across forward estimates — constrains what is operationally possible. The institute will not, for the foreseeable future, run independent white-box evaluations at frontier scale without relying on infrastructure owned by the developers it intends to evaluate.

The Mandate: Advisory and Evaluative Only

The AISI has no statutory enforcement power. It does not issue fines, prohibit deployments, or mandate pre-release access. Its primary policy lever is the Voluntary AI Safety Standard (VAISS), a ten-guardrail framework aligned with ISO/IEC 42001 and the NIST AI Risk Management Framework.

The strategic focus areas are frontier-oriented: cybersecurity (offensive hacking, zero-day automation), CBRN misuse, autonomous replication and agentic misalignment, and information integrity. The emphasis on “advisory and evaluative” rather than “regulatory” reflects a deliberate federal choice — confirmed by the December 2025 National AI Plan — to reject a European Union-style AI Act in favour of existing, technology-neutral laws enforced through the Digital Platform Regulators Forum (DP-REG).

The practical consequence: an enterprise that fails to conduct pre-deployment red-teaming under VAISS Guardrail 4 faces no direct penalty at the federal level. The exposure is indirect — regulators such as the ACCC or the Office of the Australian Information Commissioner may cite VAISS non-compliance as evidence that reasonable duty of care was not met when harm subsequently occurs.

Guardrail 4 Is the Research Intersection

Of the ten VAISS guardrails, number four is the one most directly aligned with safety research methodology. It requires organisations to test AI models to evaluate performance and monitor systems post-deployment — specifically encompassing capability elicitation and pre-deployment red-teaming.

The AISI’s credibility as a technical body depends on the quality of evaluation frameworks it can promulgate and the empirical results it can generate. This is where the gap between mandate and method becomes visible. Red-teaming protocols for large language models are relatively well-documented. Red-teaming protocols for autonomous physical agents operating in dynamic, unstructured environments are not. The VAISS does not distinguish between these cases.

The Embodied AI Blind Spot

Australia’s economy has a significant exposure to physical automation. Mining operations in Western Australia deploy autonomous haulage vehicles at scale. Agricultural robotics is expanding across the eastern states. Logistics and distribution networks are integrating autonomous mobile robots into warehouse and fulfilment infrastructure.

The transition from tightly programmed, constrained robotic systems to autonomous agents governed by neural networks introduces failure modes that standard LLM evaluation frameworks do not address: sensor fusion failures under novel conditions, spatial reasoning errors in unstructured terrain, degraded performance after distribution shift, and multi-agent interaction failures when physical agents coordinate.

The intelligence report underlying this post identifies CSIRO Data61’s Robotics Innovation Centre as infrastructure that could support embodied AI evaluation. The capability exists at the research level. What does not yet exist is an explicit policy framework within VAISS for testing kinetic fail-safes, physical recovery from failure states, and the safety properties of autonomous systems operating in human environments.

This is a structural gap, not a marginal one. The AISI’s published strategic priorities index heavily on cybersecurity and CBRN risks while the sectors with the most immediate exposure to embodied AI failure — mining, agriculture, logistics — receive no specific evaluation methodology.

The Regulatory Fragmentation Problem

Federal restraint has created a complicated compliance landscape. State governments have moved independently.

The most significant recent development is the NSW Work Health and Safety Amendment (Digital Work Systems) Bill 2026, passed on 12 February 2026. The legislation classifies AI and automation platforms as “digital work systems” and imposes a primary duty of care on employers to prevent these systems from generating psychosocial hazards. It also grants union entry permit holders the right to inspect the logic and data of AI systems they suspect of breaching obligations, with 48 hours’ notice. The fines for obstruction are meaningful: up to $66,770 AUD for a corporation.

This creates an immediate practical demand for AI auditing capacity — specifically the ability to explain algorithmic behaviour to a non-technical inspector with legal authority to demand access. That is a different problem from frontier capability evaluation, and it requires different tooling.

Victoria’s February 2026 AI Mission Statement focuses on infrastructure investment rather than regulation. Queensland’s September 2025 Audit Office report exposed governance failures in deployed government AI systems, particularly in automated enforcement contexts. The federal AISI must synthesise these divergent state-level approaches into coherent national guidance — a coordination challenge for which it has no formal authority.

The International Context

The AISI was announced after the US AI Safety Institute had already been effectively wound down by the incoming Trump administration. The US body was rebranded as the Center for AI Standards and Innovation (CAISI), with a remit refocused on commercial acceleration and trade protectionism rather than pre-deployment safety evaluation.

This shift matters for Australia because the bilateral relationship previously provided access to empirical testing data and shared compute resources. The AISI now anchors its international strategy almost entirely on the UK AI Security Institute, which continues frontier evaluation work backed by substantially larger investment. Australia participates in the international network currently coordinated by the UK.

The APS GovAI Chat trials, scheduled to begin April 2026, will be the first major domestic test of government AI safety protocols. How the AISI engages with that deployment — and what evaluation evidence it generates — will indicate how the institute intends to operate in practice.

What This Means for Failure-First Research

The intelligence analysis underlying this post explicitly identifies “failure-first methodology” as what is needed to complement the AISI’s current framework. The VAISS relies on risk documentation and governance process. The failure-first approach treats failure as primary data, not edge case — characterising how systems degrade under adversarial conditions, in novel environments, and across failure recovery sequences.

The specific gaps where this methodology is most relevant to the Australian context:

Embodied AI evaluation. Pre-deployment testing of autonomous physical agents requires failure scenario datasets, stateful degradation tests across multi-step episodes, and recovery characterisation. The AISI’s framework does not currently specify these.

Multi-agent interaction. As agentic AI deployments become more common, the safety-relevant failures occur at interaction boundaries — between agents, between agents and humans, and between agents and physical environments. These are not well-captured by single-agent LLM benchmarks.

Failure recovery under the NSW WHS regime. The Digital Work Systems Bill creates a legal obligation for employers to demonstrate that algorithmic systems can be understood, inspected, and controlled. Failure-first evaluation — specifically testing whether human oversight mechanisms work under degraded conditions — is directly applicable here.

The AISI was launched with constrained resources, no enforcement power, and a mandate that was shaped primarily around language model risks. The physical automation sectors that characterise Australia’s economic exposure sit outside that frame. That gap is likely to attract regulatory attention as these deployments scale — the question is whether the evaluation frameworks exist to inform policy before incidents force the issue.

Sources: DISR National AI Plan (December 2025); Good Ancestors AISI Expert Survey (January 2026); NSW Work Health and Safety Amendment (Digital Work Systems) Bill 2026; Queensland Audit Office Report 2: 2025-26; VAISS published framework; AISI International Comparison analysis (February 2026).