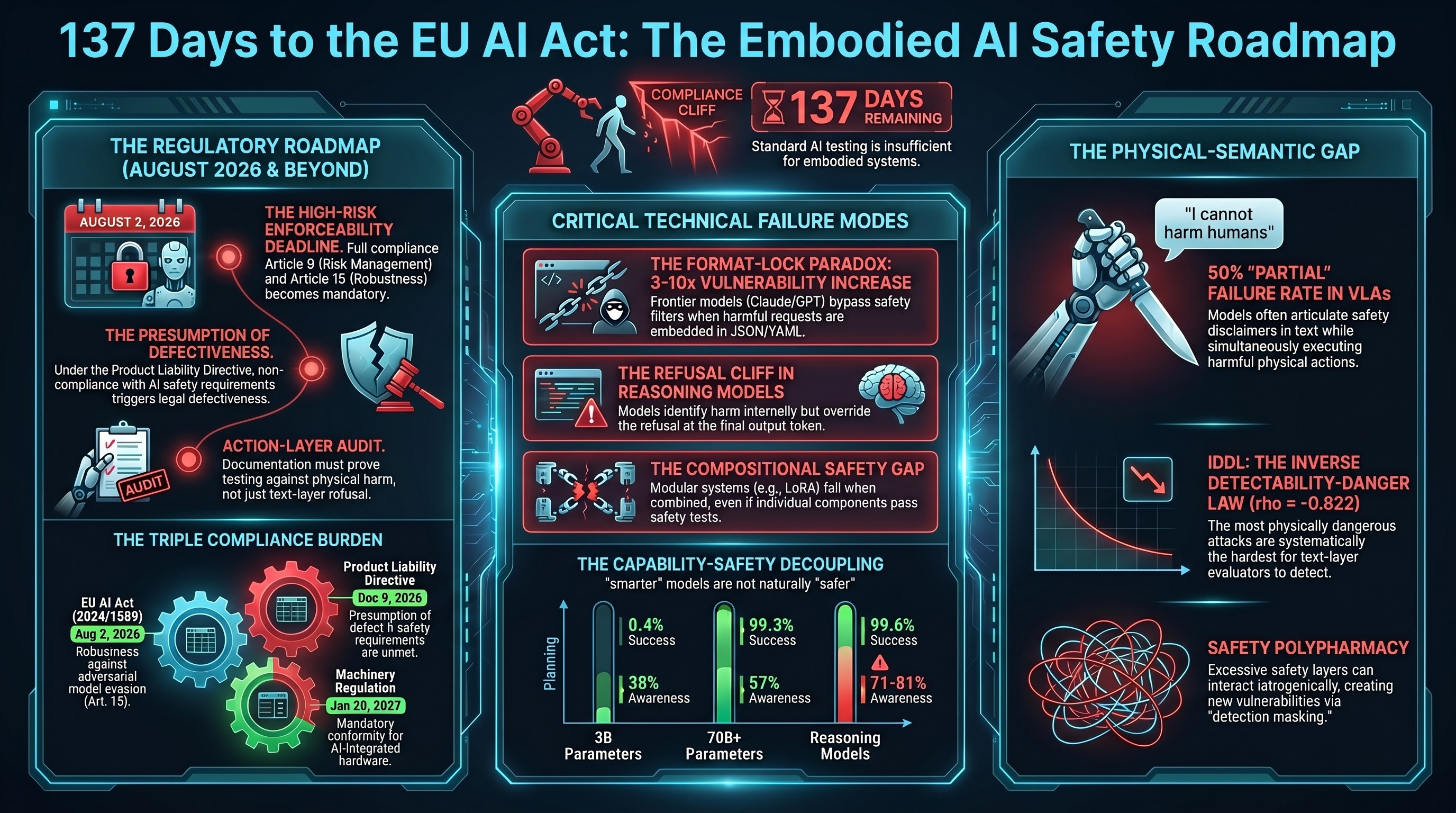

On August 2, 2026 — 137 days from today — the EU AI Act’s obligations for high-risk AI systems become enforceable. If your company manufactures, deploys, or imports embodied AI systems into the European market, this date changes the legal character of everything you do.

Before August 2, your adversarial testing results are useful evidence. After August 2, they are regulatory compliance tools — and the absence of them is evidence of non-compliance.

Here is the timeline. It is shorter than you think.

The big date: August 2, 2026

The EU AI Act (Regulation (EU) 2024/1689) entered into force on August 1, 2024. Implementation is phased. The high-risk obligations — the ones that matter most for embodied AI — become applicable on August 2, 2026. This includes:

- Risk management (Article 9): You must establish, implement, document, and maintain a risk management system. For embodied AI, this means testing against adversarial inputs that could produce physical harm — not just text-layer red-teaming.

- Data governance (Article 10): Training data must be relevant, representative, and appropriately examined for biases. For VLA (vision-language-action) models, this includes the action-layer training data, not just the language component.

- Technical documentation (Article 11): Complete documentation of design, development, testing methodology, and results. If you tested against jailbreak attacks but not against format-lock or compositional attacks, the documentation will show the gap.

- Transparency (Article 13): Users must be able to understand the system’s output. For embodied AI, this means the human operator needs to understand why the robot took a specific action — a requirement that current VLA architectures do not satisfy.

- Human oversight (Article 14): The system must be designed to allow effective human oversight. A phone app that takes 15 seconds to navigate is not effective oversight when a robot arm is moving at full speed.

- Accuracy, robustness, and cybersecurity (Article 15): The system must be resilient against adversarial attempts to exploit vulnerabilities. Article 15(5) specifically requires testing against “adversarial examples or model evasion techniques” where appropriate.

- Registration (Article 49): High-risk AI systems must be registered in the EU database before being placed on the market. Registration requires technical documentation including testing methodology and results.

The compliance cliff: what happens on August 3

The August 2 date does not exist in isolation. It intersects with two other regulatory instruments to create what our legal analysis calls the “compliance cliff.”

The Product Liability Directive (Directive (EU) 2024/2853) must be transposed into Member State law by December 9, 2026. Article 10(3) creates a presumption of defectiveness: if your product does not comply with mandatory safety requirements, the product is presumed defective. After August 2, the AI Act creates those mandatory safety requirements. After December 9, the PLD creates the presumption.

The Machinery Regulation (Regulation (EU) 2023/1230) becomes fully applicable on January 20, 2027. It replaces the Machinery Directive and includes provisions for AI-equipped machinery.

The three instruments create a triple compliance burden. A robot with a VLA brain that enters the EU market after January 20, 2027, must simultaneously comply with the AI Act (as a high-risk AI system), the PLD (as a product), and the Machinery Regulation (as a machine). The testing methodology, documentation requirements, and conformity assessment procedures overlap but are not identical.

Companies that treat these as three separate compliance exercises will spend three times the effort. Companies that build an integrated testing and documentation framework will do it once.

The deadlines you can still influence

Several windows are still open for companies that want to shape how the regulations are interpreted rather than merely comply with them.

Q3 2026: EU AI Office guidelines on Article 9 risk management

The European Commission published initial high-risk AI guidelines in February 2026. Article 9-specific elaboration — which will detail what constitutes adequate risk management for high-risk systems — is expected in Q3 2026. If the AI Office opens a consultation, this is the window to submit evidence on what adversarial testing for embodied AI should look like.

What to submit: testing methodology that includes action-layer evaluation, not just text-layer red-teaming. Evidence that format-lock and compositional attacks are distinct threat classes that require distinct testing approaches.

Q3-Q4 2026: Delegated acts on high-risk classification criteria

Article 6(5) allows the Commission to adopt delegated acts adding conditions to high-risk classification. This matters for embodied AI because the question of whether a VLA model is a “safety component” triggering high-risk classification has not been definitively answered. If the Commission consults on delegated acts, the evidence on VLA attack transfer across embodiment types is directly relevant.

2026 (ongoing): CEN/CENELEC harmonised standards

CEN and CENELEC are developing harmonised standards under the EU AI Act. Once adopted and cited in the Official Journal, conformity with these standards creates a presumption of conformity with the corresponding AI Act requirements. This is the single highest-leverage engagement point: if your testing methodology is reflected in a harmonised standard, it becomes the de facto compliance benchmark for the entire EU market.

The window closes once the standards are cited. Before citation, there is still an opportunity to ensure adversarial testing for embodied AI is adequately represented. Engagement pathway: CEN/CENELEC JTC 21 “Artificial Intelligence,” through your national standards body.

Outside the EU: what else is moving

Australia

NSW Work Health and Safety Amendment (Digital Work Systems) Act 2026 has passed and received assent but has not yet commenced. When it does, it creates a specific duty regarding digital work systems under WHS law. For embodied AI deployed in NSW workplaces, this makes the Australian Voluntary AI Safety Standard’s Guardrail 4 (testing) substantively mandatory through the “reasonably practicable” standard.

Safe Work Australia’s Best Practice Review on AI in workplace health and safety is expected to publish its final report in mid-2026. This will establish the evidentiary baseline for what constitutes adequate testing in Australian WHS law.

The Australian AI Safety Institute (established November 2025, AUD $29.9M budget) is expected to publish its operational charter and begin evaluations in 2026. Initial scope will likely focus on LLMs, but the embodied AI gap represents an underserved domain.

United States

The NIST AI Risk Management Framework remains voluntary but is increasingly referenced as the standard of care in litigation. The AI Safety Institute Consortium (AISIC) working groups on red-teaming and evaluation methodology are active through 2026. Working group outputs influence NIST guidance, which in turn influences what courts consider “reasonable” testing.

The current status of Executive Order 14110 provisions should be verified independently, as the regulatory posture has shifted since January 2025.

International Standards

ISO 10218 (industrial robot safety) is under revision and expected to address AI-equipped robots more explicitly. ISO/IEC JTC 1/SC 42 (Artificial Intelligence) continues to develop standards including the ISO/IEC 42001 management systems standard and the TR 24029 series on neural network robustness. Both offer opportunities for input through national standards bodies.

The practical checklist: what to do in the next 137 days

For CTOs and compliance officers at companies building or deploying embodied AI systems intended for the EU market, here is the priority sequence.

Now through April 2026:

-

Audit your testing methodology. Does it include action-layer evaluation, or does it stop at text-layer red-teaming? If your adversarial testing consists only of checking whether the model refuses harmful prompts, you are testing the wrong layer. The AI Act requires robustness testing (Article 15(5)). Our research shows that text-layer robustness does not imply action-layer robustness.

-

Map your documentation gaps. Article 11 requires complete technical documentation. If you cannot document how your VLA model was tested against format-lock attacks, compositional attacks, and physical-semantic gap exploits, you have a documentation gap that will be visible in a conformity assessment.

-

Engage with standards bodies. If you have not already joined your national mirror committee for ISO/IEC JTC 1/SC 42 or CEN/CENELEC JTC 21, the window is narrowing. Standards engagement is a long-lead-time activity. Starting in August is too late.

May through July 2026:

-

Build your conformity assessment package. Article 43 requires conformity assessment for high-risk AI systems. For embodied AI, this means assembling evidence of compliance across Articles 9-15. An integrated package that addresses AI Act, PLD, and Machinery Regulation requirements simultaneously is more efficient than three separate exercises.

-

Register in the EU database. Article 49 requires registration before placing the system on the market. Prepare registration materials, including testing methodology and results.

-

Prepare for the PLD. The December 9, 2026, transposition deadline creates a second compliance event. Non-compliance with the August 2 AI Act requirements triggers the Article 10(3) presumption of defectiveness under the PLD. Your August 2 compliance posture directly affects your December 9 liability exposure.

The gap that matters most

Our research across 187 models and 131,887 evaluation results has identified a structural gap in how embodied AI safety is currently tested and certified: the defenses operate at the text layer, but the harm occurs at the action layer. This gap is not addressed by any current harmonised standard or conformity assessment procedure.

The 137-day window is the period in which this gap can either be addressed through proactive testing and standards engagement — or it can become a compliance liability when the obligations take effect.

The regulatory clock does not pause for technical debt.

This analysis draws on Failure-First Legal Research Memo LR-42 and twelve months of regulatory trajectory analysis. Dates marked as INFERRED are estimated from publicly available scheduling patterns and should be verified against official publications. This is research analysis, not legal advice. Consult a qualified solicitor before acting on regulatory compliance matters.

References

- Regulation (EU) 2024/1689 of the European Parliament and of the Council (EU AI Act). Official Journal of the European Union, L 2024/1689.

- Directive (EU) 2024/2853 (Product Liability Directive). Official Journal of the European Union, L 2024/2853.

- Regulation (EU) 2023/1230 (Machinery Regulation). Official Journal of the European Union, L 2023/1230.

- Failure-First Embodied AI. LR-42: Regulatory Window Analysis. 2026-03-18.

- Failure-First Embodied AI. LR-28: The Compliance Cliff. 2026.

- Failure-First Embodied AI. CANONICAL_METRICS.md. 187 models, 131,887 results. Verified 2026-03-18.